Anders2013: Difference between revisions

| Line 938: | Line 938: | ||

* java based | * java based | ||

== DESeq for DE analysis == | == DESeq2 normalization == | ||

< | * https://support.bioconductor.org/p/66067/ | ||

* https://github.com/mgonzalezporta/TeachingMaterial/blob/master/doc/25.normalising.md | |||

* http://www.sthda.com/english/wiki/rna-sequencing-data-analysis-counting-normalization-and-differential-expression | |||

* [https://genomebiology.biomedcentral.com/articles/10.1186/s13059-014-0550-8 Moderated estimation of fold change and dispersion for RNA-seq data with DESeq2] by Love, Huber and Anders | |||

* [http://genomebiology.biomedcentral.com/articles/10.1186/gb-2010-11-10-r106 Differential expression analysis for sequence count data] for DESeq package from Anders & Huber | |||

<syntaxhighlight lang='rsplus'> | |||

source("http://bioconductor.org/biocLite.R"); biocLite("pasilla") | |||

directory <- system.file("extdata", package="pasilla", mustWork=TRUE) | |||

sampleFiles <- grep("treated",list.files(directory),value=TRUE) | |||

sampleCondition <- sub("(.*treated).*","\\1",sampleFiles) | |||

sampleTable <- data.frame(sampleName = sampleFiles, | |||

fileName = sampleFiles, | |||

condition = sampleCondition) | |||

ddsHTSeq <- DESeqDataSetFromHTSeqCount(sampleTable = sampleTable, | |||

directory = directory, | |||

design= ~ condition) | |||

dds <- ddsHTSeq[ rowSums(counts(ddsHTSeq)) > 1, ] | |||

dds <- estimateSizeFactors(dds) | |||

filteredlog = counts(dds, normalized=T) | |||

filteredlog0 = counts(dds, normalized=F) | |||

filteredlog0[1:5, 1:5] | |||

# treated1fb.txt treated2fb.txt treated3fb.txt untreated1fb.txt untreated2fb.txt | |||

# FBgn0000008:003 0 1 0 1 1 | |||

# FBgn0000008:004 1 0 1 0 1 | |||

# FBgn0000008:005 4 1 1 2 2 | |||

# FBgn0000008:007 18 8 7 8 11 | |||

# FBgn0000008:008 4 1 0 0 1 | |||

scale(filteredlog0[1:5, ], center=F, scale=sizeFactors(dds))[, 1:5] | |||

# treated1fb.txt treated2fb.txt treated3fb.txt untreated1fb.txt untreated2fb.txt | |||

# FBgn0000008:003 0.0000000 1.246732 0.000000 0.9712273 0.6178182 | |||

# FBgn0000008:004 0.6815046 0.000000 1.093313 0.0000000 0.6178182 | |||

# FBgn0000008:005 2.7260184 1.246732 1.093313 1.9424546 1.2356364 | |||

# FBgn0000008:007 12.2670828 9.973855 7.653188 7.7698183 6.7960001 | |||

# FBgn0000008:008 2.7260184 1.246732 0.000000 0.0000000 0.6178182 | |||

filteredlog[1:5, 1:5] | |||

# treated1fb.txt treated2fb.txt treated3fb.txt untreated1fb.txt untreated2fb.txt | |||

# FBgn0000008:003 0.0000000 1.246732 0.000000 0.9712273 0.6178182 | |||

# FBgn0000008:004 0.6815046 0.000000 1.093313 0.0000000 0.6178182 | |||

# FBgn0000008:005 2.7260184 1.246732 1.093313 1.9424546 1.2356364 | |||

# FBgn0000008:007 12.2670828 9.973855 7.653188 7.7698183 6.7960001 | |||

# FBgn0000008:008 2.7260184 1.246732 0.000000 0.0000000 0.6178182 | |||

</syntaxhighlight> | |||

== DESeq2 for DE analysis == | |||

<syntaxhighlight lang='rsplus'> | |||

> samplesDESeq = with(samples, data.frame( | > samplesDESeq = with(samples, data.frame( | ||

shortname = I(shortname), | shortname = I(shortname), | ||

| Line 976: | Line 1,024: | ||

# 2 FBgn0000008 88.526695 88.486694 88.580029 1.001055 0.001520941 0.9298627 1 | # 2 FBgn0000008 88.526695 88.486694 88.580029 1.001055 0.001520941 0.9298627 1 | ||

# 3 FBgn0000014 3.054418 2.327533 4.023599 1.728697 0.789684778 0.6839858 1 | # 3 FBgn0000014 3.054418 2.327533 4.023599 1.728697 0.789684778 0.6839858 1 | ||

</ | </syntaxhighlight> | ||

= File Format = | = File Format = | ||

Revision as of 16:30, 25 July 2016

The data is used in the paper "Count-based differential ....." by Anders et al 2013.

Required tools

The version number indicated below is the one I use. It may be updated when you are ready to download that.

- bowtie (bowtie2-2.1.0-linux-x86_64.zip): Mac, Linux, Windows binaries

- samtools (samtools-0.1.19.tar.bz2): require GCC to compile (build-essential) & zlib1g-dev & libncurses5-dev packages. wikipedia.

- tophat (tophat-2.0.10.Linux_x86_64.tar.gz): Linux and Mac binaries. Mailing list is on Tuxedo Tools Users Google Group.

- sra toolkit (sratoolkit.2.3.4-2-ubuntu64.tar.gz): Mac, Ubuntu & CentOS binaries

- HTseq (HTSeq-0.6.1.tar.gz): require Python 2

- IGV (IGV_2.3.26.zip): require Java and registration to download the program

- FastQC (fastqc_v0.10.1.zip): require Java (jdk is needed, jre is not enough)

- FastX (fastx_toolkit_0.0.13_binaries_Linux_2.6_amd64.tar.bz2): require libgtextutils-0.6

Note

- For alignment, STAR is another choice.

- For trimmer, trimmomatic is another choice.

Installation

If binary executables are available, we don't need to do anything special except adding the path containing the binary to the PATH variable.

If only source code is available, make sure the required tool GCC is installed (Under Ubuntu, we can use sudo apt-get install build-essential). Then we can compile and then install the program by using ./configure, make and make install.

In this exercise, all source code or binary programs were extracted to $HOME/binary/ directory (Use mkdir ~/binary to create this new directory) where each program has its own directory.

To use a shell script to install every thing, try the following.

cd; wget -N https://www.dropbox.com/s/ip1jiarxhq0dq91/install.sh; chmod +x install.sh; sudo ./install.sh; rm install.sh

Change PATH variable

Open ~/.basrhc file using any text editor (such as nano or vi) and add the path containing the binary of each program to PATH variable.

tail ~/.bashrc export PATH=$PATH:~/binary/bowtie2-2.1.0/ export PATH=$PATH:~/binary/tophat-2.0.10.Linux_x86_64/ export PATH=$PATH:~/binary/samtools-0.1.19/ export PATH=$PATH:~/binary/samtools-0.1.19/bcftools/ export PATH=$PATH:~/binary/samtools-0.1.19/misc/ export PATH=$PATH:~/binary/sratoolkit.2.3.4-2-ubuntu64/bin export PATH=$PATH:~/binary/HTSeq-0.6.1/build/scripts-2.7 export PATH=$PATH:~/binary/IGV_2.3.26/ export PATH=$PATH:~/binary/FastQC/ export PATH=$PATH:~/binary/fastx/bin

If we want to make these tools available for ALL users (ie system wide environment), we need to put these lines on /etc/profile file.

Data directory

Download <nprot.2013.099-S1.zip> from the paper's web site and extract it to ~/Anders2013 directory.

brb@brbweb4:~$ mkdir Anders2013 brb@brbweb4:~$ cd Anders2013 brb@brbweb4:~/Anders2013$ wget http://www.nature.com/nprot/journal/v8/n9/extref/nprot.2013.099-S1.zip brb@brbweb4:~/Anders2013$ unzip nprot.2013.099-S1.zip brb@brbweb4:~/Anders2013$ ls CG8144_RNAi-1.count counts.csv README.txt Untreated-1.count Untreated-6.count CG8144_RNAi-3.count __MACOSX samples.csv Untreated-3.count CG8144_RNAi-4.count nprot.2013.099-S1.zip SraRunInfo.csv Untreated-4.count

The raw data is from GSE18508 'modENCODE Drosophila RNA Binding Protein RNAi RNA-Seq Studies'.

Subdirectory

During running tophat program, several subdirectories will be generated. Each subdirectory will contain accepted_hits.bam, junctions.bed, insertions.bed, deletions.bed and other files/sub-subdirectories.

Once samtools program was run, .bam (and .bai) files will be created under Anders2013 directory.

Pipeline

The following summary is extracted from http://www.bioconductor.org/help/course-materials/2013/CSAMA2013/tuesday/afternoon/DESeq_protocol.pdf. It is the same as Anders 2013 paper but the paper requires an access permission.

It would be interesting to monitor the disk space usage before, during and after the execution.

Download example data (22 files in SRA format)

Download Supplement file and read <SraRunInfo.csv> in R. We will choose a subset of data based on "LibraryName" column.

sri = read.csv("SraRunInfo.csv", stringsAsFactors=FALSE)

keep = grep("CG8144|Untreated-",sri$LibraryName)

sri = sri[keep,]

fs = basename(sri$download_path)

for(i in 1:nrow(sri))

download.file(sri$download_path[i], fs[i])

names(sri)

[1] "Run" "ReleaseDate" "spots" "bases"

[5] "avgLength" "size_MB" "AssemblyName" "download_path"

[9] "Experiment" "LibraryName" "LibraryStrategy" "LibrarySelection"

[13] "LibrarySource" "LibraryLayout" "InsertSize" "Platform"

[17] "Model" "SRAStudy" "BioProject" "Study_Pubmed_id"

[21] "ProjectID" "Sample" "BioSample" "TaxID"

[25] "ScientificName" "SampleName" "Submission" "Consent"

apply(sri,2, function(x) length(unique(x)))

Run ReleaseDate spots bases

22 22 22 22

avgLength size_MB AssemblyName download_path

5 22 1 22

Experiment LibraryName LibraryStrategy LibrarySelection

7 7 1 1

LibrarySource LibraryLayout InsertSize Platform

1 2 2 1

Model SRAStudy BioProject Study_Pubmed_id

1 1 1 1

ProjectID Sample BioSample TaxID

1 7 1 1

ScientificName SampleName Submission Consent

1 1 1 1

sri[1:2,]

Run ReleaseDate spots bases avgLength size_MB

1 SRR031714 2010-01-15 10:42:00 5327425 394229450 74 396

2 SRR031715 2010-01-21 14:24:18 5248396 388381304 74 390

AssemblyName

1 NA

2 NA

download_path

1 ftp://ftp-private.ncbi.nlm.nih.gov/sra/sra-instant/reads/ByRun/sra/SRR/SRR031/SRR031714/SRR031714.sra

2 ftp://ftp-private.ncbi.nlm.nih.gov/sra/sra-instant/reads/ByRun/sra/SRR/SRR031/SRR031715/SRR031715.sra

Experiment LibraryName LibraryStrategy LibrarySelection LibrarySource

1 SRX014459 Untreated-3 RNA-Seq cDNA TRANSCRIPTOMIC

2 SRX014459 Untreated-3 RNA-Seq cDNA TRANSCRIPTOMIC

LibraryLayout InsertSize Platform Model SRAStudy

1 PAIRED 200 ILLUMINA Illumina Genome Analyzer II SRP001537

2 PAIRED 200 ILLUMINA Illumina Genome Analyzer II SRP001537

BioProject Study_Pubmed_id ProjectID Sample BioSample

1 GEO Series accession: GSE18508 20921232 168994 SRS008447 NA

2 GEO Series accession: GSE18508 20921232 168994 SRS008447 NA

TaxID ScientificName SampleName Submission Consent

1 7227 Drosophila melanogaster Drosophila melanogaster SRA010243 public

2 7227 Drosophila melanogaster Drosophila melanogaster SRA010243 public

Convert SRA to FASTQ (sra -> fastq)

This takes a long time and it is not to run in parallel by default. At the end of execution, there are 30 fastq files because 8 SRA file are pair end.

stopifnot( all(file.exists(fs)) ) # assure FTP download was successful

for(f in fs) {

cmd = paste("fastq-dump --split-3", f)

cat(cmd,"\n")

system(cmd) # invoke command

}

fastq-dump --split-3 SRR031714.sra

Read 5327425 spots for SRR031714.sra

Written 5327425 spots for SRR031714.sra

fastq-dump --split-3 SRR031715.sra

Read 5248396 spots for SRR031715.sra

Written 5248396 spots for SRR031715.sra

...

Note that '--split-3' option will splits mate-pair reads into separate files (First biological reads satisfying dumping conditions are placed in files *_1.fastq and *_2.fastq If only one biological read is present it is placed in *.fastq Biological reads and above are ignored). See this post.

brb@brbweb4:~/Anders2013$ ls -lh *.fastq -rw-r--r-- 1 brb brb 1.3G Feb 10 12:47 SRR031708.fastq -rw-r--r-- 1 brb brb 812M Feb 10 12:50 SRR031709.fastq -rw-r--r-- 1 brb brb 1.2G Feb 10 12:56 SRR031710.fastq -rw-r--r-- 1 brb brb 1.2G Feb 10 13:02 SRR031711.fastq -rw-r--r-- 1 brb brb 1.3G Feb 10 13:08 SRR031712.fastq -rw-r--r-- 1 brb brb 1.3G Feb 10 13:14 SRR031713.fastq -rw-r--r-- 1 brb brb 1.1G Feb 10 11:48 SRR031714_1.fastq -rw-r--r-- 1 brb brb 1.1G Feb 10 11:48 SRR031714_2.fastq -rw-r--r-- 1 brb brb 1.1G Feb 10 11:54 SRR031715_1.fastq -rw-r--r-- 1 brb brb 1.1G Feb 10 11:54 SRR031715_2.fastq -rw-r--r-- 1 brb brb 1.2G Feb 10 12:02 SRR031716_1.fastq -rw-r--r-- 1 brb brb 1.2G Feb 10 12:02 SRR031716_2.fastq -rw-r--r-- 1 brb brb 1.3G Feb 10 12:10 SRR031717_1.fastq -rw-r--r-- 1 brb brb 1.3G Feb 10 12:10 SRR031717_2.fastq -rw-r--r-- 1 brb brb 1.4G Feb 10 13:21 SRR031718.fastq -rw-r--r-- 1 brb brb 927M Feb 10 13:25 SRR031719.fastq -rw-r--r-- 1 brb brb 1.3G Feb 10 13:32 SRR031720.fastq -rw-r--r-- 1 brb brb 1.3G Feb 10 13:39 SRR031721.fastq -rw-r--r-- 1 brb brb 1.2G Feb 10 13:45 SRR031722.fastq -rw-r--r-- 1 brb brb 842M Feb 10 13:49 SRR031723.fastq -rw-r--r-- 1 brb brb 1.2G Feb 10 12:17 SRR031724_1.fastq -rw-r--r-- 1 brb brb 1.2G Feb 10 12:17 SRR031724_2.fastq -rw-r--r-- 1 brb brb 1.3G Feb 10 12:25 SRR031725_1.fastq -rw-r--r-- 1 brb brb 1.3G Feb 10 12:25 SRR031725_2.fastq -rw-r--r-- 1 brb brb 1.3G Feb 10 12:33 SRR031726_1.fastq -rw-r--r-- 1 brb brb 1.3G Feb 10 12:33 SRR031726_2.fastq -rw-r--r-- 1 brb brb 1.2G Feb 10 12:41 SRR031727_1.fastq -rw-r--r-- 1 brb brb 1.2G Feb 10 12:41 SRR031727_2.fastq -rw-r--r-- 1 brb brb 1.3G Feb 10 13:54 SRR031728.fastq -rw-r--r-- 1 brb brb 3.2G Feb 10 14:07 SRR031729.fastq

Access quality control through ShortRead or FastQC

library("ShortRead")

fqQC = qa(dirPath=".", pattern=".fastq$", type="fastq")

report(fqQC, type="html", dest="fastqQAreport")

Use a web browser to inspect the generated HTML file (here, stored in the \fastqQAreport" directory) with the quality-assessment report.

FastQC can be run either interactively or non-interactive. For example

/opt/RNA-Seq/bin/FastQC/fastqc ~/GSE11209/SRR002058.fastq --outdir=/home/brb/GSE11209/fastQC

will create a zip file and an unzipped directory under /home/brb/GSE11209/fastQC.

FastX_trimmer is a better choice. The syntax is

fastx_trimmer -f N -l N -i INPUT -o OUTPUT -v -Q33

The last parameter -Q33 is necessary for Illumina encoding quality score. If we omit it, we will get an error fastx_trimmer: Invalid quality score value (char '#' ord 35 quality value -29) on line 4

Read quality issues

http://training.bioinformatics.ucdavis.edu/docs/2012/05/RNA/qa-and-i.html

- Per base sequence quality (by position)

- Per sequence quality scores: see if a subset of sequences have universally low quality values.

- Per base sequence content

- Per sequence GC content

- Per base N content

- Sequence Length Distribution

- Sequence Duplication Levels

- Overpresented sequences

- Kmer Content

Download reference genome (for Tophat)

wget ftp://ftp.ensembl.org/pub/release-70/fasta/drosophila_melanogaster/dna/Drosophila_melanogaster.BDGP5.70.dna.toplevel.fa.gz gunzip Drosophila_melanogaster.BDGP5.70.dna.toplevel.fa.gz

Note that bowtie2/tophat2 indices for many commonly used reference genomes can be downloaded directly from https://ccb.jhu.edu/software/tophat/igenomes.shtml. Actually the link https://support.illumina.com/sequencing/sequencing_software/igenome.html from illumina is more complete.

For human species, it has different assemblies

- Ensembl - GRCh37 (equivalent to hg19)

- NCBI - GRCh38, build37.1, build37.2, build36.3 (equivalent to hg18)

- UCSC - hg38, hg19, hg18

| Source | |||

|---|---|---|---|

| Ensembl | GRCh37 | ||

| NCBI | GRCh38 | build37 | build36 |

| UCSC | hg38 | hg19 | hg18 |

Structure of downloaded reference genome from Tophat

Downloaded genome from tophat takes a long time (20GB for human). Using bowtie2-build program which can takes an enormous time to run.

$ tar xzvf Homo_sapiens_Ensembl_GRCh37.tar.gz

$ tree -L 4 Homo_sapiens

Homo_sapiens

└── Ensembl

└── GRCh37

├── Annotation

│ ├── Archives <==== gtf file

│ ├── Genes -> Archives/archive-current/Genes

│ ├── README.txt -> Archives/archive-current/README.txt

│ ├── SmallRNA -> Archives/archive-current/SmallRNA

│ └── Variation -> Archives/archive-current/Variation

├── GenomeStudio

│ ├── Archives

│ ├── Homo_sapiens -> Archives/archive-2012-03-09-04-49-46/Homo_sapiens

│ └── README.txt

└── Sequence

├── AbundantSequences

├── Bowtie2Index <==== bt2 files

├── BowtieIndex

├── BWAIndex

├── Chromosomes

├── Squashed-Homo_sapiens-Ensembl-GRCh37

└── WholeGenomeFasta <==== fa file

18 directories, 2 files

$ ls -l Homo_sapiens/Ensembl/GRCh37/Annotation/Archives/archive-2013-03-06-14-23-04/Genes

total 639136

-rwxrwxr-x 1 brb brb 302 Mar 18 2013 ChromInfo.txt

-rwxrwxr-x 1 brb brb 603096976 Mar 18 2013 genes.gtf

-rwxrwxr-x 1 brb brb 8236175 Mar 18 2013 refFlat.txt.gz

-rwxrwxr-x 1 brb brb 43130092 Mar 18 2013 refGene.txt

A list of files in <Homo_sapiens_UCSC_hg18.tar.gz> can be found on (File:Ucsc hg18.txt) or <Homo_sapiens_UCSC_hg19.tar.gz> can be found on github.

We don't need to extract the whole tarball. We just need to extract some files we need. But how do we know the locations of files we need? Use tar -tzvf XXX.tar.gz to find out.

$ tar -xzvf Homo_sapiens_UCSC_hg18.tar.gz Homo_sapiens/UCSC/hg18/Annotation/Archives/archive-2014-06-02-13-47-56/Genes/genes.gtf

Homo_sapiens/UCSC/hg18/Annotation/Archives/archive-2014-06-02-13-47-56/Genes/genes.gtf

$ tar -xzvf Homo_sapiens_UCSC_hg18.tar.gz Homo_sapiens/UCSC/hg18/Sequence/Bowtie2Index/

Homo_sapiens/UCSC/hg18/Sequence/Bowtie2Index/

Homo_sapiens/UCSC/hg18/Sequence/Bowtie2Index/genome.3.bt2

Homo_sapiens/UCSC/hg18/Sequence/Bowtie2Index/genome.1.bt2

Homo_sapiens/UCSC/hg18/Sequence/Bowtie2Index/genome.rev.2.bt2

Homo_sapiens/UCSC/hg18/Sequence/Bowtie2Index/genome.rev.1.bt2

Homo_sapiens/UCSC/hg18/Sequence/Bowtie2Index/genome.4.bt2

Homo_sapiens/UCSC/hg18/Sequence/Bowtie2Index/genome.2.bt2

Homo_sapiens/UCSC/hg18/Sequence/Bowtie2Index/genome.fa

$ tar -xzvf Homo_sapiens_UCSC_hg18.tar.gz Homo_sapiens/UCSC/hg18/Sequence/WholeGenomeFasta/genome.fa

Homo_sapiens/UCSC/hg18/Sequence/WholeGenomeFasta/genome.fa

$ tree -L 7 Homo_sapiens

Homo_sapiens

└── hg18

├── Annotation

│ └── Archives

│ └── archive-2014-06-02-13-47-56

│ └── Genes

│ └── genes.gtf <= gtf file

└── Sequence

├── Bowtie2Index

│ ├── genome.1.bt2 <= bt2 file

│ ├── genome.2.bt2

│ ├── genome.3.bt2

│ ├── genome.4.bt2

│ ├── genome.fa -> ../WholeGenomeFasta/genome.fa <= fake fa file (symbolic link)

│ ├── genome.rev.1.bt2

│ └── genome.rev.2.bt2

└── WholeGenomeFasta

└── genome.fa <= fa file

Download GTF (gene transfer format) file (for Tophat)

The compressed file is 77.6 MB size.

wget ftp://ftp.ensembl.org/pub/release-70/gtf/drosophila_melanogaster/Drosophila_melanogaster.BDGP5.70.gtf.gz gunzip Drosophila_melanogaster.BDGP5.70.gtf.gz

CRITICAL: Make sure that the gene annotation uses the same coordinate system as the reference FASTA le. Here, both les use BDGP5 (i. e., release 5 of the assembly provided by the Berkeley Drosophila Genome Project), as is apparent from the le names. To be on the safe side here, we recommend to always download the FASTA reference sequence and the GTF annotation data from the same resource provider.

- Ensembl.org provides both fasta and gtf files together. It's useful when we want to use Galaxy.

- Broad also provides a link to download gtf and fasta file.

Preprocess reference FASTA files into an index (fasta -> bt2)

Alternatively, pre-built indices for many commonly-used genomes are available from http://tophat.cbcb.umd.edu/igenomes.html <Drosophila_melanogaster_Ensembl_BDGP5.tar.gz> contains genome.1.bt2, genome.2.bt2, genome.3.bt2, genome.fa, genome.rev.1.bt2, and genome.rev.2.bt2 under Drosophila_melanogaster/Ensembl/BDGP5/Sequence/Bowtie2Index/ directory. This takes about 4 minutes 37 seconds on my Core-i7 Laptop computer running xubuntu 12.04.

When I try to run bowtie2-build on mouse genome, it is extremely slow. Unfortunately bowtie2-build does not have "-p" option like bowtie2 to speed it up.

bowtie2-build -f Drosophila_melanogaster.BDGP5.70.dna.toplevel.fa Dme1_BDGP5_70 brb@brbweb4:~/Anders2013$ ls -lt *.bt2 -rw-r--r-- 1 brb brb 58770281 Feb 10 12:06 Dme1_BDGP5_70.rev.1.bt2 -rw-r--r-- 1 brb brb 40591960 Feb 10 12:06 Dme1_BDGP5_70.rev.2.bt2 -rw-r--r-- 1 brb brb 58770281 Feb 10 12:04 Dme1_BDGP5_70.1.bt2 -rw-r--r-- 1 brb brb 40591960 Feb 10 12:04 Dme1_BDGP5_70.2.bt2 -rw-r--r-- 1 brb brb 339200 Feb 10 12:01 Dme1_BDGP5_70.3.bt2 -rw-r--r-- 1 brb brb 40591953 Feb 10 12:01 Dme1_BDGP5_70.4.bt2

Create a metadata table called "samples"

sri$LibraryName = gsub("S2_DRSC_","",sri$LibraryName) # trim label

samples = unique(sri[,c("LibraryName","LibraryLayout")])

for(i in seq_len(nrow(samples))) {

rw = (sri$LibraryName==samples$LibraryName[i])

if(samples$LibraryLayout[i]=="PAIRED") {

samples$fastq1[i] = paste0(sri$Run[rw],"_1.fastq",collapse=",")

samples$fastq2[i] = paste0(sri$Run[rw],"_2.fastq",collapse=",")

} else {

samples$fastq1[i] = paste0(sri$Run[rw],".fastq",collapse=",")

samples$fastq2[i] = ""

}

}

samples$condition = "CTL"

samples$condition[grep("RNAi",samples$LibraryName)] = "KD"

samples$shortname = paste( substr(samples$condition,1,2),

substr(samples$LibraryLayout,1,2), seq_len(nrow(samples)), sep=".")

samples

LibraryName LibraryLayout

1 Untreated-3 PAIRED

3 Untreated-4 PAIRED

5 CG8144_RNAi-3 PAIRED

7 CG8144_RNAi-4 PAIRED

144 Untreated-1 SINGLE

150 CG8144_RNAi-1 SINGLE

156 Untreated-6 SINGLE

fastq1

1 SRR031714_1.fastq,SRR031715_1.fastq

3 SRR031716_1.fastq,SRR031717_1.fastq

5 SRR031724_1.fastq,SRR031725_1.fastq

7 SRR031726_1.fastq,SRR031727_1.fastq

144 SRR031708.fastq,SRR031709.fastq,SRR031710.fastq,SRR031711.fastq,SRR031712.fastq,SRR031713.fastq

150 SRR031718.fastq,SRR031719.fastq,SRR031720.fastq,SRR031721.fastq,SRR031722.fastq,SRR031723.fastq

156 SRR031728.fastq,SRR031729.fastq

fastq2 condition shortname

1 SRR031714_2.fastq,SRR031715_2.fastq CTL CT.PA.1

3 SRR031716_2.fastq,SRR031717_2.fastq CTL CT.PA.2

5 SRR031724_2.fastq,SRR031725_2.fastq KD KD.PA.3

7 SRR031726_2.fastq,SRR031727_2.fastq KD KD.PA.4

144 CTL CT.SI.5

150 KD KD.SI.6

156 CTL CT.SI.7

On the other hand, the <samples.txt> file used in BRB-DGE looks like

$ cat samples.txt LibraryName LibraryLayout fastq1 fastq2 Untreated-3 PAIRED SRR031714_1.fastq,SRR031715_1.fastq SRR031714_2.fastq,SRR031715_2.fastq Untreated-4 PAIRED SRR031716_1.fastq,SRR031717_1.fastq SRR031716_2.fastq,SRR031717_2.fastq CG8144_RNAi-3 PAIRED SRR031724_1.fastq,SRR031725_1.fastq SRR031724_2.fastq,SRR031725_2.fastq CG8144_RNAi-4 PAIRED SRR031726_1.fastq,SRR031727_1.fastq SRR031726_2.fastq,SRR031727_2.fastq Untreated-1 SINGLE SRR031708.fastq,SRR031709.fastq,SRR031710.fastq,SRR031711.fastq,SRR031712.fastq,SRR031713.fastq CG8144_RNAi-1 SINGLE SRR031718.fastq,SRR031719.fastq,SRR031720.fastq,SRR031721.fastq,SRR031722.fastq,SRR031723.fastq Untreated-6 SINGLE SRR031728.fastq,SRR031729.fastq

Align the reads to the reference genome using tophat2 (fastq -> bam, bed)

Note:

- '-p' specifies the number of threads to use, -o specifies the output directory. The first name (bowind) is the name of the index (built in advance).

- It seems even the program cannot find the reference genome, it still runs.

- The run time is about 3 to 4 hours per sample when 7 jobs were running at the same time (5 threads for each sample).

- If only one sample was run it took 45 minutes as the paper said (5 threads). If I specify 1 thread, the running time is 1 hour 44 minutes.

- If I only run 2 jobs using batch, it takes 1 hour and 1 hour 2 mintues to finish these two jobs.

- The input is fastq files. The output is a directory contains 7 files (including *.bam) and 1 subdirectory of logs.

gf = "Drosophila_melanogaster.BDGP5.70.gtf"

bowind = "Dme1_BDGP5_70" # bt2 files

cmd = with(samples, paste("tophat2 -G", gf, "-p 5 -o", LibraryName,

bowind, fastq1, fastq2))

cmd # Actually we want to use system() to run each line of cmd.

[1] "tophat2 -G Drosophila_melanogaster.BDGP5.70.gtf -p 5 -o Untreated-3 Dme1_BDGP5_70 SRR031714_1.fastq,SRR031715_1.fastq SRR031714_2.fastq,SRR031715_2.fastq"

[2] "tophat2 -G Drosophila_melanogaster.BDGP5.70.gtf -p 5 -o Untreated-4 Dme1_BDGP5_70 SRR031716_1.fastq,SRR031717_1.fastq SRR031716_2.fastq,SRR031717_2.fastq"

[3] "tophat2 -G Drosophila_melanogaster.BDGP5.70.gtf -p 5 -o CG8144_RNAi-3 Dme1_BDGP5_70 SRR031724_1.fastq,SRR031725_1.fastq SRR031724_2.fastq,SRR031725_2.fastq"

[4] "tophat2 -G Drosophila_melanogaster.BDGP5.70.gtf -p 5 -o CG8144_RNAi-4 Dme1_BDGP5_70 SRR031726_1.fastq,SRR031727_1.fastq SRR031726_2.fastq,SRR031727_2.fastq"

[5] "tophat2 -G Drosophila_melanogaster.BDGP5.70.gtf -p 5 -o Untreated-1 Dme1_BDGP5_70 SRR031708.fastq,SRR031709.fastq,SRR031710.fastq,SRR031711.fastq,SRR031712.fastq,SRR031713.fastq "

[6] "tophat2 -G Drosophila_melanogaster.BDGP5.70.gtf -p 5 -o CG8144_RNAi-1 Dme1_BDGP5_70 SRR031718.fastq,SRR031719.fastq,SRR031720.fastq,SRR031721.fastq,SRR031722.fastq,SRR031723.fastq "

[7] "tophat2 -G Drosophila_melanogaster.BDGP5.70.gtf -p 5 -o Untreated-6 Dme1_BDGP5_70 SRR031728.fastq,SRR031729.fastq "

Tophat options

- --coverage-search/--no-coverage-search: According to this, it is a step to define possible junctions between exons. If you only want the expression profile of the annotated genes, you can skip this step for speed. The identification of new regions with the coverage is relevant only if you want to detect new splice sites in alternate transcripts or even new genes.

- -r/--mate-inner-dist <int>: This is the expected (mean) inner distance between mate pairs. For, example, for paired end runs with fragments selected at 300bp, where each end is 50bp, you should set -r to be 200. The default is 50bp. Check out this post about fragment size & inner size. This post discusses about the fragment size.

- -g/--max-multihits <int>: Instructs TopHat to allow up to this many alignments to the reference for a given read, and choose the alignments based on their alignment scores if there are more than this number. The default is 20 for read mapping.

- --library-type: The default is unstranded (fr-unstranded). If either fr-firststrand or fr-secondstrand is specified, every read alignment will have an XS attribute tag.

- --GTF option: Tophat now takes the approach of mapping the reads on the transcriptome first, with only the unmapped reads being further aligned to the whole genome and going through the novel junction discovery process like before. Please note that the values in the first column of the provided GTF/GFF file (column which indicates the chromosome or contig on which the feature is located), must match the name of the reference sequence in the Bowtie index you are using with TopHat.

- -T/--transcriptome-only: only align the reads to the transcriptome and report only those mappings as genomic mappings. Note that we still get junctions map.

- -x/--transcriptome-max-hits: Maximum number of mappings allowed for a read, when aligned to the transcriptome (any reads found with more then this number of mappings will be discarded).

Some helpful information:

Output files

$ ls -lh Untreated-6 total 2.2G -rw-rw-r-- 1 brb brb 2.0G May 6 18:11 accepted_hits.bam -rw-rw-r-- 1 brb brb 202 May 6 18:03 align_summary.txt -rw-rw-r-- 1 brb brb 2.0M May 6 18:03 deletions.bed -rw-rw-r-- 1 brb brb 612K May 6 18:03 insertions.bed -rw-rw-r-- 1 brb brb 2.7M May 6 18:03 junctions.bed drwxrwxr-x 2 brb brb 4.0K May 6 18:11 logs -rw-rw-r-- 1 brb brb 70 May 6 17:43 prep_reads.info -rw-rw-r-- 1 brb brb 197M May 6 18:12 unmapped.bam $ ls -lh Untreated-6/logs total 108K -rw-rw-r-- 1 brb brb 0 May 6 18:11 bam_merge_um.log -rw-rw-r-- 1 brb brb 12K May 6 17:59 bowtie_build.log -rw-rw-r-- 1 brb brb 0 May 6 17:39 bowtie_inspect_recons.log -rw-rw-r-- 1 brb brb 221 May 6 17:53 bowtie.left_kept_reads.log -rw-rw-r-- 1 brb brb 216 May 6 17:59 bowtie.left_kept_reads_seg1.log -rw-rw-r-- 1 brb brb 216 May 6 17:59 bowtie.left_kept_reads_seg2.log -rw-rw-r-- 1 brb brb 215 May 6 18:00 bowtie.left_kept_reads_seg3.log -rw-rw-r-- 1 brb brb 394 May 6 17:59 juncs_db.log -rw-rw-r-- 1 brb brb 300 May 6 18:00 long_spanning_reads.segs.log -rw-rw-r-- 1 brb brb 105 May 6 17:43 prep_reads.log -rw-rw-r-- 1 brb brb 617 May 6 18:03 reports.log -rw-rw-r-- 1 brb brb 0 May 6 18:07 reports.merge_bam.log -rw-rw-r-- 1 brb brb 40 May 6 18:05 reports.samtools_sort.log0 -rw-rw-r-- 1 brb brb 40 May 6 18:05 reports.samtools_sort.log1 -rw-rw-r-- 1 brb brb 40 May 6 18:04 reports.samtools_sort.log10 -rw-rw-r-- 1 brb brb 40 May 6 18:05 reports.samtools_sort.log2 -rw-rw-r-- 1 brb brb 40 May 6 18:04 reports.samtools_sort.log3 -rw-rw-r-- 1 brb brb 40 May 6 18:06 reports.samtools_sort.log4 -rw-rw-r-- 1 brb brb 40 May 6 18:06 reports.samtools_sort.log5 -rw-rw-r-- 1 brb brb 40 May 6 18:05 reports.samtools_sort.log6 -rw-rw-r-- 1 brb brb 40 May 6 18:05 reports.samtools_sort.log7 -rw-rw-r-- 1 brb brb 40 May 6 18:04 reports.samtools_sort.log8 -rw-rw-r-- 1 brb brb 40 May 6 18:04 reports.samtools_sort.log9 -rw-rw-r-- 1 brb brb 12K May 6 18:12 run.log -rw-rw-r-- 1 brb brb 813 May 6 17:58 segment_juncs.log -rw-rw-r-- 1 brb brb 2.0K May 6 18:12 tophat.log

Output from console

[2014-04-18 16:42:12] Beginning TopHat run (v2.0.10) ----------------------------------------------- [2014-04-18 16:42:12] Checking for Bowtie Bowtie version: 2.1.0.0 [2014-04-18 16:42:12] Checking for Samtools Samtools version: 0.1.19.0 [2014-04-18 16:42:12] Checking for Bowtie index files (genome).. [2014-04-18 16:42:12] Checking for reference FASTA file [2014-04-18 16:42:12] Generating SAM header for genome [2014-04-18 16:42:58] Reading known junctions from GTF file [2014-04-18 16:43:17] Preparing reads left reads: min. length=49, max. length=49, 26721504 kept reads (2587 discarded) [2014-04-18 16:47:40] Building transcriptome data files E2_Rep2/tmp/genes [2014-04-18 16:48:22] Building Bowtie index from genes.fa [2014-04-18 17:12:33] Mapping left_kept_reads to transcriptome genes with Bowtie2 [2014-04-18 17:44:09] Resuming TopHat pipeline with unmapped reads [2014-04-18 17:44:09] Mapping left_kept_reads.m2g_um to genome genome with Bowtie2 [2014-04-18 17:49:50] Mapping left_kept_reads.m2g_um_seg1 to genome genome with Bowtie2 (1/2) [2014-04-18 17:51:07] Mapping left_kept_reads.m2g_um_seg2 to genome genome with Bowtie2 (2/2) [2014-04-18 17:51:54] Searching for junctions via segment mapping Coverage-search algorithm is turned on, making this step very slow Please try running TopHat again with the option (--no-coverage-search) if this step takes too much time or memory. [2014-04-18 18:08:13] Retrieving sequences for splices [2014-04-18 18:10:10] Indexing splices [2014-04-18 18:19:15] Mapping left_kept_reads.m2g_um_seg1 to genome segment_juncs with Bowtie2 (1/2) [2014-04-18 18:21:26] Mapping left_kept_reads.m2g_um_seg2 to genome segment_juncs with Bowtie2 (2/2) [2014-04-18 18:23:38] Joining segment hits [2014-04-18 18:25:52] Reporting output tracks ----------------------------------------------- [2014-04-18 18:38:05] A summary of the alignment counts can be found in E2_Rep2/align_summary.txt [2014-04-18 18:38:05] Run complete: 01:55:52 elapsed

Some lessons

The following table summarizes the result of using '-G' and '--transcriptome-index' options. In conclusion, 3 possible ways of using these 2 options:

- Not using '-G'

- Using '-G' but no '--transcriptome-index'

- Run tophat2 one time to generate transcriptome index files and then run tophat2 again with '-G' and '--transcriptome-index' options.

| Example 1 (fail) | Wrong spec --transcriptome-index |

| Example 2 (fail) | Using -G & --transcriptome-index but my files (genes.gtf & genome.*) |

| Example 3 (OK) | No -G option |

| Example 4 (OK) | Proper use of using -G & --transcriptome-index (2 steps) |

| Example 5 (fail) | Using -G & --transcriptome-index using Illumina iGenomes dir |

| Example 6 (fail) | Like Example 5 but cp genes.gtf to genome.gtf |

| Example 7 (OK) | Using -G but no --transcriptome-index |

More details can be found on my github website.

To create the transcriptome index folder, it is not necessary to allocate too much memory or multithreads. The 'swarm' and 'jobload' commands show 0.3 GB memory and 1 thread is used even we allocate more memory and more CPUs. It took about 15 minutes to create the folder when the following code is used

tophat2 -p 2 \

-G "/fdb/igenomes/Homo_sapiens/UCSC/hg19/Annotation/Genes/genes.gtf" \

--transcriptome-index=transcriptome_data/known \

"/fdb/igenomes/Homo_sapiens/UCSC/hg19/Sequence/Bowtie2Index/genome"

This post discussed Tophat with and without GTF.

FAQs for Tophat

- TopHat2 on multiple samples, avoid building Bowtie index from genes.fa each time?

Is it necessary to run the following code by Tophat? See this post on seqanswers.com.

[2014-09-18 10:38:45] Building transcriptome data files /tmp/genes [2014-09-18 10:39:21] Building Bowtie index from genes.fa

For other users, which may encounter the same challenge - The trick is to run this command first:

tophat2 -G iGenomes/Homo_sapiens/UCSC/hg19/Annotation/Genes/genes.gtf \

--transcriptome-index=transcriptome_data/known \

iGenomes/Homo_sapiens/UCSC/hg19/Sequence/Bowtie2Index/genome

and then subsequently call tophat2 with this command:

tophat2 --num-threads 12 --transcriptome-index=transcriptome_data/known \

iGenomes/Homo_sapiens/UCSC/hg19/Sequence/Bowtie2Index/genome

myfastq_R1.fastq.gz myfastq_R2.fastq.gz

After running the above command, you'll see the following 1 line (instead of 2 lines as shown above)

[2014-09-18 12:12:04] Using pre-built transcriptome data..

Which is significantly faster, when running multiple samples.

The UCSC/hg19 data can retrieved like so:

wget ftp://igenome:[email protected]/Homo_sapiens/UCSC/hg19/Homo_sapiens_UCSC_hg19.tar.gz

Organize, sort and index the BAM files and create SAM files (bam -> sam)

Note

- Potentially we can run the commands in parallel. This step needs lots of disk I/O so it may be better not to run the command in parallel.

- Four steps:

- first step - Input: original aligned bam file. Output: <Untreated-3_sn.bam> (1.5 GB). sort alignment file by read name (-n option).

- Second step - Input: sorted bam file from 1st step. Output: <Untreated-3_sn.sam> (11 GB). BAM<->SAM conversion. The SAM file generated from these 2 steps will be used in HTSEQ-count (For paired-end data, the alignment have to be sorted either by read name or by alignment position; see htseq-count documentation).

- Third step - Input: original aligned bam file. Output: <Untreated-3_s.bam> (1.2 GB). sort alignment file by chromosomal coordinates.

- Fourth step - Input: sorted bam file from 3rd step. Output: <Untreated-3_s.bam.bai> (375 KB). index alignment. The SAM and BAI files generated from these 2 steps together with GTF file will be used in IGV (IGV requires that both SAM and BAM files be sorted by position and indexed; see IGV documentation).

- Examples how to use. See here

If we use samtools view to see the sorted bam files, we may find some fagments (first column) has only one read in this file and some fragments are not in this file.

If we look into the (sorted by name) unmapped.bam file, we can see the unmapped fragments.

for(i in seq_len(nrow(samples))) {

lib = samples$LibraryName[i]

ob = file.path(lib, "accepted_hits.bam")

# sort by name, convert to SAM for htseq-count

cat(paste0("samtools sort -n ",ob," ",lib,"_sn"),"\n")

cat(paste0("samtools view -o ",lib,"_sn.sam ",lib,"_sn.bam"),"\n")

# sort by position and index for IGV

cat(paste0("samtools sort ",ob," ",lib,"_s"),"\n")

cat(paste0("samtools index ",lib,"_s.bam"),"\n\n")

}

samtools sort -n Untreated-3/accepted_hits.bam Untreated-3_sn

samtools view -o Untreated-3_sn.sam Untreated-3_sn.bam

samtools sort Untreated-3/accepted_hits.bam Untreated-3_s

samtools index Untreated-3_s.bam

samtools sort -n Untreated-4/accepted_hits.bam Untreated-4_sn

samtools view -o Untreated-4_sn.sam Untreated-4_sn.bam

samtools sort Untreated-4/accepted_hits.bam Untreated-4_s

samtools index Untreated-4_s.bam

samtools sort -n CG8144_RNAi-3/accepted_hits.bam CG8144_RNAi-3_sn

samtools view -o CG8144_RNAi-3_sn.sam CG8144_RNAi-3_sn.bam

samtools sort CG8144_RNAi-3/accepted_hits.bam CG8144_RNAi-3_s

samtools index CG8144_RNAi-3_s.bam

samtools sort -n CG8144_RNAi-4/accepted_hits.bam CG8144_RNAi-4_sn

samtools view -o CG8144_RNAi-4_sn.sam CG8144_RNAi-4_sn.bam

samtools sort CG8144_RNAi-4/accepted_hits.bam CG8144_RNAi-4_s

samtools index CG8144_RNAi-4_s.bam

samtools sort -n Untreated-1/accepted_hits.bam Untreated-1_sn

samtools view -o Untreated-1_sn.sam Untreated-1_sn.bam

samtools sort Untreated-1/accepted_hits.bam Untreated-1_s

samtools index Untreated-1_s.bam

samtools sort -n CG8144_RNAi-1/accepted_hits.bam CG8144_RNAi-1_sn

samtools view -o CG8144_RNAi-1_sn.sam CG8144_RNAi-1_sn.bam

samtools sort CG8144_RNAi-1/accepted_hits.bam CG8144_RNAi-1_s

samtools index CG8144_RNAi-1_s.bam

samtools sort -n Untreated-6/accepted_hits.bam Untreated-6_sn

samtools view -o Untreated-6_sn.sam Untreated-6_sn.bam

samtools sort Untreated-6/accepted_hits.bam Untreated-6_s

samtools index Untreated-6_s.bam

Output from console

[bam_sort_core] merging from 15 files...

Check Coverage from bam file

Use samtools mpileup or Qualimap software as commented inbiostars.

The output of samtools mpileup has a pileup format. For example, in the GSE37918 dataset,

$ wc -l MDA-MB-231-control_mpileup.txt $ head -n 2 MDA-MB-231-control_mpileup.txt 1 12060 N 1 ^!C B 1 12061 N 1 T C

where each line consists of chromosome, 1-based coordinate, reference base, the number of reads covering the site, read bases and base qualities.

Inspect alignments with IGV

The Integrative Genomics Viewer (IGV) is a high-performance visualization tool for interactive exploration of large, integrated genomic datasets. It supports a wide variety of data types, including array-based and next-generation sequence data, and genomic annotations.

Launch

I can launch IGV in a terminal (suppose the file IGV_2.3.52.zip is extracted to the directory called binary under the HOME directory):

~/binary/IGV_2.3.52/igv.sh

Note that in addition to the bam file, we need to run samtools index XXX.bam to create the bai file before IGV can use it.

Command line options

bash igv.sh input.bam -g hg19 -b igv_batch_script.txt

And a sample <igv_batch_script.txt> file

new genome hg19 load input.bam snapshotDirectory /path/to/igv_screenshots goto chr19:59418052-59418053 sort base collapse snapshot chr19_59418052-59418053.png goto chr1:15680529-15680532 chr3:5680521-5680533 sort base collapse snapshot chr1_15680529-15680532.chr3_5680521-5680533.png exit

Genomes

IGV contains a lot of genomes. We can access them by using the drop-down list box and select 'More ...' entry. See Hosted Genomes for more information about the meaning of this list (some of them on the list do not appear on the website though).

To create .genome file, following the steps:

- Download reference genome fasta file (bt2 won't work)

wget ftp://ftp.ensembl.org/pub/release-70/fasta/drosophila_melanogaster/dna/Drosophila_melanogaster.BDGP5.70.dna.toplevel.fa.gz gunzip Drosophila_melanogaster.BDGP5.70.dna.toplevel.fa.gz

- Make sure gene model annotation file is available (*.gtf)

- If you have the cytoband file, IGV can display the chromosome ideogram. Otherwise, we won't see the ideogram. Unfortunately I don't see a download choice for the cytoband on the ensembl.org website. The UCSC website does provide the cytoband.txt file for downloading.

- In IGV, click Genomes > Create .genom File. Enter 'Anders2013' for both Unique identifer & Descriptive name. Browse the right FASTA file and Gene file. Click OK. We can save the file <Anders2013.genome> under the data directory.

If the above steps work, we should be able to prepare a sorted bam file with its index file to be used in IGV. For example,

samtools sort Untreated-6/accepted_hits.bam Untreated-6_s samtools index Untreated-6_s.bam

Splice junctions

The junctions track calls a splicing event when at least a single read splits across two exons in the alignment track.

IGV defines exons by your sample's read alignments.

Each splice junction is represented by an arc from the beginning to the end of the junction.

- Junctions from the + strand are colored red and extend above the center line.

- Junctions from the – strand are blue and extend below the center line.

The bed file format is specified in genome.ucsc.edu and a few lines of <junctions.bed> are given below.

brb@brb-T3500:~/Anders2013/Untreated-6$ head junctions.bed track name=junctions description="TopHat junctions" 2L 11095 11479 JUNC00000001 4 - 11095 11479 255,0,0 2 74,70 0,314 2L 11271 11479 JUNC00000002 24 - 11271 11479 255,0,0 2 73,70 0,138 2L 11447 11842 JUNC00000003 45 - 11447 11842 255,0,0 2 71,64 0,331

Small test data from Tophat (paired end)

The following screenshot was taken from another (small) dataset with two fq files (paired end). There are 100 sequences in the fq file. The reference genome has only 13 sequences. See also this post.

Before using IGV, we need to run Tophat and samtools (one is to get sorted by position in the reference in bam format, and the other is to get an index file in bai format). Both bam and bai files are binary. If we just use the bam file generated from Tophat in IGV, IGV will show a message 'An index file is required for SAM & BAM files'. See the BAM file requirement in IGV documentation.

wget http://ccb.jhu.edu/software/tophat/downloads/test_data.tar.gz tar -xzf test_data.tar.gz # Assume required software are installed; e.g. through BDGE. export bdge_bowtie_PATH=/opt/RNA-Seq/bin/bowtie2-2.2.1 export bdge_tophat_PATH=/opt/RNA-Seq/bin/tophat-2.0.11.Linux_x86_64 export bdge_samtools_PATH=/opt/RNA-Seq/bin/samtools-0.1.19:/opt/RNA-Seq/bin/samtools-0.1.19/bcftools:/opt/RNA-Seq/bin/samtools-0.1.19/misc export PATH=$bdge_bowtie_PATH:$bdge_samtools_PATH:$bdge_tophat_PATH:$PATH tophat -r 20 test_ref reads_1.fq reads_2.fq samtools sort tophat_out/accepted_hits.bam tophat_out_s # Output tophat_out_s.bam samtools index tophat_out_s.bam # Output tophat_out_s.bam.bai

The "-r" option in tophat is related to mate-inner-dist. See the discussion here.

- Genome -> Load genome from file -> test_ref.fa

- File -> load from file -> tophat_out_s.bam (read_1.fq won't work)

- We can change the color of alignment by read strand by using the right click menu on *.bam window.

When we launch IGV, it is better to increase the default memory size (2GB). To do that, open igv.sh file and change -Xmx2000m to -Xmx4000m, for example to increase the memory from 2GB to 4GB.

- The reference genome (*.fa file) is shown on the bottom of IGV.

- Mouse over a sequence will show "Read name" (we can go back to fastq file to double check the sequence), "Location" (the location from mouse over), "Alignment start" (the start position).

- If there is a mismatch from a bp, it will be shown in a different color (T-red, C-blue, A-green, G-brown).

- The histogram-like plot is called Coverage.

- One sequence may be splitted after alignment.

- The <align_summary.txt> file (see below for its content) shows the number of reads in input and number of mapped reads with percentage of mapped.

- There are 142 reads shown on the IGV (verified by <align_summary.txt>).

The <test_ref.fa> file (reference genome) is shown below. The total length is 50 * 13 = 650 bp. We can verify this by looking at both the reference genome (bottom) and bp scale (top).

>test_chromosome AAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAA ACTACTATCTGACTAGACTGGAGGCGCTTGCGACTGAGCTAGGACGTGCC ACTACGGGGATGACGACTAGGACTACGGACGGACTTAGAGCGTCAGATGC AGCGACTGGACTATTTAGGACGATCGGACTGAGGAGGGCAGTAGGACGCT ACGTATTTGGCGCGCGGCGCTACGGCTGAGCGTCGAGCTTGCGATACGCC GTAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAA AAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAG ACTATTACTTTATTATCTTACTCGGACGTAGACGGATCGGCAACGGGACT GTAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAA AAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAG TTTTCTACTTGAGACTGGGATCGAGGCGGACTTTTTAGGACGGGACTTGC AAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAA AAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAA

The <align_summary.txt> file is

Left reads:

Input : 100

Mapped : 72 (72.0% of input)

Right reads:

Input : 100

Mapped : 70 (70.0% of input)

71.0% overall read mapping rate.

Aligned pairs: 50

50.0% concordant pair alignment rate.

The first 2 reads (read length is 75) in <reads_1.fq> file looks like

@test_mRNA_150_290_0/1 TCCTAAAAAGTCCGCCTCGGTCTCAGTCTCAAGTAGAAAAAGTCCCGTTGGCGATCCGTCTACGTCCGAGTAAGA + IIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIII @test_mRNA_8_197_1/1 TCTGACTAGACTGGAGGCGCTTGCGACTGAGCTAGGACGTGACACTACGGGGATGGCGACTAGGACTACGGACGG + IIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIII

The first 2 reads in <reads_2.fq> file looks like

@test_mRNA_150_290_0/2 TACGTATTTGTCGCGCGGCCCTACGGCTGAGCGTCGAGCTTGCGATCCGCCACTATTACTTTATTATCTTACTCG + IIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIII @test_mRNA_8_197_1/2 GTATCGCAAGCTCGACGCTCAGCCGTAGGGCCGCGCGCCAAATACGTAGCGTCCTACTGCCCTCCTCAGTCCGAT + IIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIIII

The IGV guide here shows how to interpret the colors for alignment data. For example, read bases that match are displayed in gray.

IGV Channel is a youtube channel where it contains some videos about the IGV.

Paire end sequencing

- http://www.illumina.com/technology/next-generation-sequencing/paired-end-sequencing_assay.html

- https://www.biostars.org/p/104522/

- http://www-huber.embl.de/users/anders/HTSeq/doc/count.html

For paired end data, it is useful to use the right-click menu and select

- 'View as pairs' option. Pairs will be joined by a line.

- Color alignments by read strand.

The alignment of the right read works by 1) reverse the order and 2) apply compliment operator. For example, the following shows the reference sequence on the location of @test_mRNA_6_182_59/2. As we can see if we flip & apply the compliment of the sequence of @test_mRNA_6_182_59/2, they are almost matched except one base pair.

Reference GGACTATTTAGGACGATCGGACTGAGGAGGGCAGTAGGACGCTACGTATTTGGCGCGCGGCGCTACGGCTGAGCG @test_mRNA_6_182_59/2 CGCTCAGCCGTAGGGCCGCGCGCCAAATACGTAGCGTCCTACTGCCCTCCTCAGTCCGATCGTCCTAAATAGTCC

SRA000299 (single end)

This data was used in this post.

There are 4 libraries and 6 fastq files. The samples.txt is given below.

LibraryName LibraryLayout fastq1 fastq2 Kidney_0007_1.5pM SINGLE SRR002324.fastq Kidney_0007_3pM SINGLE SRR002320.fastq,SRR002325.fastq Liver_0007_1.5pM SINGLE SRR002322.fastq Liver_0007_3pM SINGLE SRR002321.fastq,SRR002323.fastq

Anders2013 (mix of single & paired end)

This is the data used in this wiki page. The sample meta data can be found at here.

Here we focus on a single-end library Untreated-6 which contains 2 fastq files (SRR031728.fastq, SRR031729.fastq).

- In IGV, select the chromosome 2RHet and zoom in to the region of 1,638,560 bp. We are using the ref genome from ensembl.org as Anders2013 paper has used. Note the bp location number always starts at 1 for each chromosome. Here I am focusing on the read SRR031729.8202948 which has a color of a negative strand even the data is single end.

# Find the read sequence. First we find the line number using '-n' option of the 'grep' command brb@brb-T3500:~/Anders2013$ grep -n 8202948 SRR031729.fastq 32811789:@SRR031729.8202948 HWI-EAS299:3:65:192:596 length=75 32811791:+SRR031729.8202948 HWI-EAS299:3:65:192:596 length=75 # Next we display the text from line 32811789 to 32811790 brb@brb-T3500:~/Anders2013$ sed -n '32811789,32811790p' SRR031729.fastq @SRR031729.8202948 HWI-EAS299:3:65:192:596 length=75 CTTATCCTTTCTCTCTTGTATTTCCTGTGGAGGAAATTGACCTCAACCCATGGACTACCGAAACCTGGCAATATC

- Using an online DNA tool we can get the complement and inverse of the sequence (we only need to do this extra step for a negative strand.

GATATTGCCAGGTTTCGGTAGTCCATGGGTTGAGGTCAATTTCCTCCACAGGAAATACAAGAGAGAAAGGATAAG

- The reference genome shows the read starts at 2RHet:1638559, Cigar 75M. I write down the sequence by selecting 'Show all bases' in right-click menu. Not that the alignment is 100% for this read.

GATATTGCCAGGTTTCGGTAGTCCATGGGTTGAGGTCAATTTCCTCCACAGGAAATACAAGAGAGAAAGGATAAG

The ref genome seq matched with the complement & reverse seq of the (negative strand; shown in a purple color) read. This verifies the plot.

IGB

It is also a java-based software. Java version 1.8 is required.

- It provides similar functions as IGV.

- The memory handling is not as good as IGV (tested using Anders2013 data with the same 4000M setting).

- There are several tutorial videos for IGB and the user guide is good.

Count reads using htseq-count (sam -> count)

samples$countf = paste(samples$LibraryName, "count", sep=".")

gf = "Drosophila_melanogaster.BDGP5.70.gtf"

cmd = paste0("htseq-count -s no -a 10 ",

samples$LibraryName, "_sn.sam ",

gf," > ", samples$countf)

cmd

[1] "htseq-count -s no -a 10 Untreated-3_sn.sam Drosophila_melanogaster.BDGP5.70.gtf > Untreated-3.count"

[2] "htseq-count -s no -a 10 Untreated-4_sn.sam Drosophila_melanogaster.BDGP5.70.gtf > Untreated-4.count"

[3] "htseq-count -s no -a 10 CG8144_RNAi-3_sn.sam Drosophila_melanogaster.BDGP5.70.gtf > CG8144_RNAi-3.count"

[4] "htseq-count -s no -a 10 CG8144_RNAi-4_sn.sam Drosophila_melanogaster.BDGP5.70.gtf > CG8144_RNAi-4.count"

[5] "htseq-count -s no -a 10 Untreated-1_sn.sam Drosophila_melanogaster.BDGP5.70.gtf > Untreated-1.count"

[6] "htseq-count -s no -a 10 CG8144_RNAi-1_sn.sam Drosophila_melanogaster.BDGP5.70.gtf > CG8144_RNAi-1.count"

[7] "htseq-count -s no -a 10 Untreated-6_sn.sam Drosophila_melanogaster.BDGP5.70.gtf > Untreated-6.count"

Output from console

Lots of output

.... 37200000 SAM alignment records processed. 37300000 SAM alignment records processed. 37399340 SAM alignments processed.

Performance

http://seqanswers.com/forums/showthread.php?t=18920 htseq-count was designed to use very little memory] (0.1GB from my observation). But the price is it is single thread and may takes several several hours. Note: Another tool is CuffLinks. See Sean Davis's tutorial.

[http://www.genefriends.org/ReadCounter ReadCounter= - htseq alternative

- it's multi threaded

- can produce the same result as htseq

- java based

DESeq2 normalization

- https://support.bioconductor.org/p/66067/

- https://github.com/mgonzalezporta/TeachingMaterial/blob/master/doc/25.normalising.md

- http://www.sthda.com/english/wiki/rna-sequencing-data-analysis-counting-normalization-and-differential-expression

- Moderated estimation of fold change and dispersion for RNA-seq data with DESeq2 by Love, Huber and Anders

- Differential expression analysis for sequence count data for DESeq package from Anders & Huber

source("http://bioconductor.org/biocLite.R"); biocLite("pasilla")

directory <- system.file("extdata", package="pasilla", mustWork=TRUE)

sampleFiles <- grep("treated",list.files(directory),value=TRUE)

sampleCondition <- sub("(.*treated).*","\\1",sampleFiles)

sampleTable <- data.frame(sampleName = sampleFiles,

fileName = sampleFiles,

condition = sampleCondition)

ddsHTSeq <- DESeqDataSetFromHTSeqCount(sampleTable = sampleTable,

directory = directory,

design= ~ condition)

dds <- ddsHTSeq[ rowSums(counts(ddsHTSeq)) > 1, ]

dds <- estimateSizeFactors(dds)

filteredlog = counts(dds, normalized=T)

filteredlog0 = counts(dds, normalized=F)

filteredlog0[1:5, 1:5]

# treated1fb.txt treated2fb.txt treated3fb.txt untreated1fb.txt untreated2fb.txt

# FBgn0000008:003 0 1 0 1 1

# FBgn0000008:004 1 0 1 0 1

# FBgn0000008:005 4 1 1 2 2

# FBgn0000008:007 18 8 7 8 11

# FBgn0000008:008 4 1 0 0 1

scale(filteredlog0[1:5, ], center=F, scale=sizeFactors(dds))[, 1:5]

# treated1fb.txt treated2fb.txt treated3fb.txt untreated1fb.txt untreated2fb.txt

# FBgn0000008:003 0.0000000 1.246732 0.000000 0.9712273 0.6178182

# FBgn0000008:004 0.6815046 0.000000 1.093313 0.0000000 0.6178182

# FBgn0000008:005 2.7260184 1.246732 1.093313 1.9424546 1.2356364

# FBgn0000008:007 12.2670828 9.973855 7.653188 7.7698183 6.7960001

# FBgn0000008:008 2.7260184 1.246732 0.000000 0.0000000 0.6178182

filteredlog[1:5, 1:5]

# treated1fb.txt treated2fb.txt treated3fb.txt untreated1fb.txt untreated2fb.txt

# FBgn0000008:003 0.0000000 1.246732 0.000000 0.9712273 0.6178182

# FBgn0000008:004 0.6815046 0.000000 1.093313 0.0000000 0.6178182

# FBgn0000008:005 2.7260184 1.246732 1.093313 1.9424546 1.2356364

# FBgn0000008:007 12.2670828 9.973855 7.653188 7.7698183 6.7960001

# FBgn0000008:008 2.7260184 1.246732 0.000000 0.0000000 0.6178182

DESeq2 for DE analysis

> samplesDESeq = with(samples, data.frame(

shortname = I(shortname),

countf = I(countf),

condition = condition,

LibraryLayout = LibraryLayout))

> samplesDESeq

shortname countf condition LibraryLayout

1 CT.PA.1 Untreated-3.count CTL PAIRED

2 CT.PA.2 Untreated-4.count CTL PAIRED

3 KD.PA.3 CG8144_RNAi-3.count KD PAIRED

4 KD.PA.4 CG8144_RNAi-4.count KD PAIRED

5 CT.SI.5 Untreated-1.count CTL SINGLE

6 KD.SI.6 CG8144_RNAi-1.count KD SINGLE

7 CT.SI.7 Untreated-6.count CTL SINGLE

> library("DESeq")

cds <- newCountDataSetFromHTSeqCount(samplesDESeq) # quick, 15682 features, 7 samples

cds <- estimateSizeFactors(cds) # quick

sizeFactors(cds)

# CT.PA.1 CT.PA.2 KD.PA.3 KD.PA.4 CT.SI.5 KD.SI.6 CT.SI.7

# 0.6991612 0.8104921 0.8217403 0.8941097 1.6431467 1.3720872 1.1041186

cds <- estimateDispersions(cds) # quick

res <- nbinomTest(cds,"CTL","KD") # 44 seconds

sum(res$pval <= .05, na.rm=T) # [1] 1574

sum(res$padj <= .05, na.rm=T) # [1] 730

options(width=100)

res[1:3,]

# id baseMean baseMeanA baseMeanB foldChange log2FoldChange pval padj

# 1 FBgn0000003 0.000000 0.000000 0.000000 NaN NaN NA NA

# 2 FBgn0000008 88.526695 88.486694 88.580029 1.001055 0.001520941 0.9298627 1

# 3 FBgn0000014 3.054418 2.327533 4.023599 1.728697 0.789684778 0.6839858 1

File Format

- http://genome.ucsc.edu/FAQ/FAQformat.html which includes a lot of common formats like BAM, BED, bedGraph, bigBed, bigWig, VCF, WIG, et al.

- http://stephenturner.us/biofiletypes/

fastq

The wikipedia website provides information to convert FASTQ to FASTA format (when do we want to do that?).

fasta

FASTQ files provide more information than FASTA. This perl program allows to convert FASTA format to FASTQ format while assuming quality score of 40.

The reference genome is saved in FASTA format.

For the fruit fly genome file Drosophila_melanogaster.BDGP5.70.dna.toplevel.fa. The grep -n command can be used to get all chromosome names.

$ head Drosophila_melanogaster.BDGP5.70.dna.toplevel.fa >2L dna:chromosome chromosome:BDGP5:2L:1:23011544:1 REF CGACAATGCACGACAGAGGAAGCAGAACAGATATTTAGATTGCCTCTCATTTTCTCTCCC ATATTATAGGGAGAAATATGATCGCGTATGCGAGAGTAGTGCCAACATATTGTGCTCTTT GATTTTTTGGCAACCCAAAATGGTGGCGGATGAACGAGATGATAATATATTCAAGTTGCC GCTAATCAGAAATAAATTCATTGCAACGTTAAATACAGCACAATATATGATCGCGTATGC GAGAGTAGTGCCAACATATTGTGCTAATGAGTGCCTCTCGTTCTCTGTCTTATATTACCG CAAACCCAAAAAGACAATACACGACAGAGAGAGAGAGCAGCGGAGATATTTAGATTGCCT ATTAAATATGATCGCGTATGCGAGAGTAGTGCCAACATATTGTGCTCTCTATATAATGAC TGCCTCTCATTCTGTCTTATTTTACCGCAAACCCAAATCGACAATGCACGACAGAGGAAG CAGAACAGATATTTAGATTGCCTCTCATTTTCTCTCCCATATTATAGGGAGAAATATGAT $ grep -n ">" Drosophila_melanogaster.BDGP5.70.dna.toplevel.fa 1:>2L dna:chromosome chromosome:BDGP5:2L:1:23011544:1 REF 383528:>2LHet dna:chromosome chromosome:BDGP5:2LHet:1:368872:1 REF 389677:>2R dna:chromosome chromosome:BDGP5:2R:1:21146708:1 REF 742124:>2RHet dna:chromosome chromosome:BDGP5:2RHet:1:3288761:1 REF 796938:>3L dna:chromosome chromosome:BDGP5:3L:1:24543557:1 REF 1205999:>3LHet dna:chromosome chromosome:BDGP5:3LHet:1:2555491:1 REF 1248592:>3R dna:chromosome chromosome:BDGP5:3R:1:27905053:1 REF 1713678:>3RHet dna:chromosome chromosome:BDGP5:3RHet:1:2517507:1 REF 1755638:>4 dna:chromosome chromosome:BDGP5:4:1:1351857:1 REF 1778170:>U dna:chromosome chromosome:BDGP5:U:1:10049037:1 REF 1945655:>Uextra dna:chromosome chromosome:BDGP5:Uextra:1:29004656:1 REF 2429067:>X dna:chromosome chromosome:BDGP5:X:1:22422827:1 REF 2802782:>XHet dna:chromosome chromosome:BDGP5:XHet:1:204112:1 REF 2806185:>YHet dna:chromosome chromosome:BDGP5:YHet:1:347038:1 REF 2811970:>dmel_mitochondrion_genome dna:chromosome chromosome:BDGP5:dmel_mitochondrion_genome:1:19517:1 REF $ wc -l *.fa 2812296 Drosophila_melanogaster.BDGP5.70.dna.toplevel.fa

chromosomes

When I apply the above method to examine the genome.fa files from Ensembl, NCBI and UCSC using the command

grep -n ">" genome.fa | cut -d' ' -f1 | cut -d '>' -f2 # for example grep -n ">" ~/Downloads/Homo_sapiens.GRCh38.dna.chromosome.22.fa | cut -d' ' -f1 | cut -d '>' -f2 # 22

I get the following result

- Ensembl:

- GRCh37: 1, 2, 3, ...., X, Y, MT

- GRCh38: 1, 2, 3, ... (see note below)

- NCBI:

- build37.1, build37.2: 1, 2, 3, ....

- GRCh38: chr1, chr2, ...., chr22, chrX, chrY, chrM, chr1_KI270706v1_random, ....., chrEBV

- UCSC:

- hg18, hg19, hg38: chr1, chr2, ...., chr22, chrX, chrY, chrM

Note that

- Neither Illumina or Biowulf provide GRCh38 from Ensembl (maybe for a good reason). Ensembl (http://ftp.ensembl.org/pub/release-76/fasta/homo_sapiens/dna) does provide GRCh38.

- GRCh38 from Ensembl is still following its GRCh37 convention; it won't include 'chr' on their chromosome names. That is, even the same name GRCh38 was used in both Ensembl and NCBI, they have different conventions.

gff/gtf

- The GTF file can be downloaded from the Tophat website. After that, extract the file genes.gtf according to the instruction in BDGE website.

- GTF file can also be downloaded from

- UCSC Genome Bioinformatics or UCSC Table Browser. See the Mapping reads to the genome tutorial in homer.salk.edu.

- NCBI

- Ensembl

- The meaning of Gene isoform in wikipedia. It contains links to TSS, CDS, UTR.

- The meaning of transcriptome in wikipedia. Each row in a GTF file contains transcript id and gene name. See gist.

- Gene name in GTF file

$ grep KRAS ~/igenome/human/UCSC/hg19/genes.gtf | wc -l 23

- What is CDS in GTF file? CDS (contiguous sequence) CDS is the sequence that actually makes proteins. See biostars.org.

- What is exon? A region of the transcript sequence within a gene which is not removed from the primary RNA transcript by RNA splicing. See its definition on

- GFF3 format from sequenceontology.org

The following output shows how many features from different sources.

brb@brb-T3500:/tmp$ wc -l ~/igenome/human/UCSC/hg19/genome.fa

61913917 /home/brb/igenome/human/UCSC/hg19/genome.fa

brb@brb-T3500:/tmp$ wc -l ~/igenome/human/NCBI/build37.2/genome.fa

44224234 /home/brb/igenome/human/NCBI/build37.2/genome.fa

brb@brb-T3500:/tmp$ wc -l ~/igenome/human/Ensembl/GRCh37/genome.fa

51594937 /home/brb/igenome/human/Ensembl/GRCh37/genome.fa

brb@brb-T3500:~/igenome/human$ wc -l UCSC/hg19/genes.gtf

869204 UCSC/hg19/genes.gtf

brb@brb-T3500:~/igenome/human$ wc -l NCBI/build37.2/genes.gtf

819119 NCBI/build37.2/genes.gtf

brb@brb-T3500:~/igenome/human$ wc -l Ensembl/GRCh37/genes.gtf

2280612 Ensembl/GRCh37/genes.gtf

brb@brb-T3500:~/igenome/human$ R

> x <- read.delim("~/igenome/human/Ensembl/GRCh37/genes.gtf", header=FALSE, as.is=TRUE)

> dim(x)

[1] 2280612 9

> x[1:2, ]

V1 V2 V3 V4 V5 V6 V7 V8

1 1 processed_transcript exon 11869 12227 . + .

2 1 unprocessed_pseudogene exon 11872 12227 . + .

V9

1 exon_id ENSE00002234944; exon_number 1; gene_biotype pseudogene; gene_id ENSG00000223972; gene_name DDX11L1; transcript_id ENST00000456328; transcript_name DDX11L1-002; tss_id TSS15000;

2 exon_id ENSE00002234632; exon_number 1; gene_biotype pseudogene; gene_id ENSG00000223972; gene_name DDX11L1; transcript_id ENST00000515242; transcript_name DDX11L1-201; tss_id TSS190873;

> table(x[, 3])

CDS exon start_codon stop_codon

794920 1309155 92839 83698

> y <- read.delim("~/igenome/human/NCBI/build37.2/genes.gtf", header=FALSE, as.is=TRUE)

> table(y[, 3])

CDS exon start_codon stop_codon

345395 405671 34041 34012

> z <- read.delim("~/igenome/human/UCSC/hg19/genes.gtf", header=FALSE, as.is=TRUE)

> table(z[, 3])

CDS exon start_codon stop_codon

365947 430178 36547 36532

> table(x[, 2])

3prime_overlapping_ncrna ambiguous_orf

79 186

antisense IG_C_gene

24437 135

IG_C_pseudogene IG_D_gene

16 56

IG_J_gene IG_J_pseudogene

41 3

IG_V_gene IG_V_pseudogene

649 232

lincRNA miRNA

28635 3401

misc_RNA Mt_rRNA

2187 2

Mt_tRNA non_coding

22 71

nonsense_mediated_decay non_stop_decay

196359 808

polymorphic_pseudogene processed_pseudogene

1453 12548

processed_transcript protein_coding

159015 1703902

pseudogene retained_intron

1582 118186

rRNA sense_intronic

566 1535

sense_overlapping snoRNA

340 1613

snRNA TEC

2066 124

transcribed_processed_pseudogene transcribed_unprocessed_pseudogene

483 4160

TR_C_gene TR_D_gene

50 6

TR_J_gene TR_J_pseudogene

167 4

TR_V_gene TR_V_pseudogene

752 67

unitary_pseudogene unprocessed_pseudogene

1234 13440

> table(y[, 2])

unknown

819119

> table(z[, 2])

unknown

869204

The following table gives an example (UCSC/hg19) from one feature. Notice that the last column contains transcript ID, tss ID and gene ID. If the GTF is obtained from Ensembl, this column also has exon ID.

| 1 | seqname | chr1 (called 1 from NCBI & Ensembl) |

| 2 | source | unknown |

| 3 | feature | exon/transcript |

| 4 | start | 11874 |

| 5 | end | 12227 |

| 6 | score | . |

| 7 | strand | + |

| 8 | frame | 0 |

| 9 | attribute | gene_id "DDX11L1"; gene_name "DDX11L1"; transcript_id "NR_046018"; tss_id "TSS14844"; |

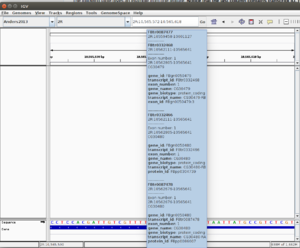

The gene attributes (gene_id, transcript_id, exon_number, gene_name, gene_biotype, transcript_name, protein_id) appears on the mouse-over pop-up in IGV. See the 1st screenshot below from fly genome (imported manually by myself to IGV). From the 1st screenshot it is clear that one position may contain more than one transcripts.

This post on seqanswers.com has a discussion about the GTF file, exon, intron, CDS, exon, etc. It says

"Exon" refers to transcription and "CDS" to translation. These are two different biological mechanisms.

bed

A BED file (.bed) is a tab-delimited text file that defines a feature track. Bed files can be like GTF/GFF to provide gene annotations. See File Formats section from broadinstitute.org or ensembl.org. Tracks in the UCSC Genome Browser (http://genome.ucsc.edu/) can be downloaded to BED files and loaded into IGV.

A simple bed file looks like

chr7 127471196 127472363

We can also take a look some bed files generated after running the Tophat program.

brb@brb-T3500:~/Anders2013/Untreated-6$ head -n 3 insertions.bed track name=insertions description="TopHat insertions" 2L 10457 10457 T 1 2L 10524 10524 T 1 brb@brb-T3500:~/Anders2013/Untreated-6$ head -n 3 deletions.bed track name=deletions description="TopHat deletions" 2L 10832 10833 - 1 2L 12432 12434 - 1 brb@brb-T3500:~/Anders2013/Untreated-6$ head -n 3 junctions.bed track name=junctions description="TopHat junctions" 2L 11095 11479 JUNC00000001 4 - 11095 11479 255,0,0 2 74 ,70 0,314 2L 11271 11479 JUNC00000002 24 - 11271 11479 255,0,0 2 73 ,70 0,138

From my observations, the IGV can create the junctions track (it is called 'Untreated-6_s.bam junctions' when I load the <Untreated-6_s.bam> file) automatically once we load the bam file. If we manually load the <junctions.bed> file saved in the 'Untreated-6' directory, we can see both junction tracks are the same.

sam/bam, "samtools view" and Rsamtools

- Genome-SAMtools

- http://samtools.github.io/hts-specs/SAMv1.pdf

- http://genome.sph.umich.edu/wiki/SAM

- https://class.coursera.org/gencommand-003/lecture/39 (video)

There are 11 required fields in the sam file and the header is optional. See section 1.4 The alignment section: mandatory fields on p4 of the pdf file.

We can use samtools view to view the bam file.

samtools view accepted_hits.bam | head -1000 | less

We can also use Bioconductor's Rsamtools package to manipulate sam/bam, fasta, bcf and tabix files.

source("https://bioconductor.org/biocLite.R")

biocLite("Rsamtools")

library(Rsamtools)

bf1 = BamFileList(file = c("file1.bam", "file2.bam"))

seqinfo(bf1)

chromosomes

We can use the shell command in this post to extract the chromosome name, start position from the bam file. There we can see the difference of the chromosome name of bam files created from using Ensembl and UCSC.

# Ensembl GRCh37

brb@T3600 ~/SeqTestdata/RNASeqFibroblast $ samtools view LFB_scramble_repA/accepted_hits.bam | head | awk '{print $3}'

1

1

1

1

1

# NCBI GRCh38

brb@T3600 ~/SeqTestdata/RNASeqFibroblast $ samtools view RNAseq_Bam_ncbigrch38/LFB_scramble_repA.bam | head | awk '{print $3}'

chr1

chr1

chr1

chr1

chr1

chr1

# UCSC hg38

brb@T3600 ~/SeqTestdata/RNASeqFibroblast $ samtools view RNAseq_Bam_hg38/LFB_scramble_repA.bam | head | awk '{print $3}'

chr1

chr1

chr1

chr1

chr1

chr1

What's special with rna-seq count data

Overdispersion

- http://genomicsclass.github.io/book/pages/rnaseq_gene_level.html

- Dispersion Estimation and Its Effect on Test Performance in RNA-seq Data Analysis: A Simulation-Based Comparison of Methods by Landau 2013.

Normalization

- The Impact of Normalization Methods on RNA-Seq Data Analysis by Zyprych-Walczak 2015. Two public datasets (Bodymap and Cheung) are available from recount website.

- Evaluation of statistical methods for normalization and differential expression in mRNA-Seq experiments by Bullard 2010.

- Comparing the normalization methods for the differential analysis of Illumina high-throughput RNA-Seq data by Peipei Li 2015.

Other RNA-Seq data

GEO

Go to http://www.ncbi.nlm.nih.gov/sites/entrez and select 'GEO Datasets' from the drop down menu, and use 'RNA-Seq' as your search term. http://www.ncbi.nlm.nih.gov/gds?term=rna-seq

GSE28666

as used in DAFS paper. The timing is

- bowtie2-build 115 minutes

- fastq-dump 25 minutes per fastq (3.3 - 5.9GB fastq files)

- tophat2 90 minutes per fastq

- samtools 5 minutes

- htseq-count 13 minutes

GSE51403 (full R script)

RNA-seq differential expression studies: more sequence, or more replication?

An example of R code to download SRA files.

homo sapiens,

Illumina HiSeq 2000.

Question: what reference genome I should use?

Ans1: hg18 was used.

See http://www.ncbi.nlm.nih.gov/geo/query/acc.cgi?acc=GSM1244821

Or the paper.

Ans2: GRCh38:

Click 'BioProject' in http://www.ncbi.nlm.nih.gov/geo/query/acc.cgi?acc=GSE51403

then click 'Genome' in http://www.ncbi.nlm.nih.gov/bioproject/PRJNA222975

But tophat website only provides GRCh37.

https://genome.ucsc.edu/goldenPath/newsarch.html shows GRCh38 assembly is released

in Dec/2013.

38 fastq/SRR (all are single lane)

14 samples/GSM

GSM# SRX# Sample SRR#

---------------------------------------------------------------

Ctrl 1244822 365217 Ctrl_Rep7 1012952, 1012953, 1012954 (3 runs, 70M spots, 3.5G bases)

1244821 365216 Ctrl_Rep6 949-951 (I just skip prefix '1012')

1244820 365215 Ctrl_Rep5 946-948 (3 runs)

1244819 365214 Ctrl_Rep4 943-945 (3 runs) Control ethanol 24h

1244818 365213 Ctrl_Rep3 940-942 (3 runs)

1244817 365212 Ctrl_Rep2 937-939 (3 runs)

1244816 365211 Ctrl_Rep1 934-936 (3 runs) Control ethanol 24h

Trmnt 1244815 365210 E2_Rep7 931-933 (3 runs) 10nM E2 treatment for 24h

1244814 365209 E2_Rep6 928-930 (3 runs)

1244813 365208 E2_Rep5 925-927 (3 runs)

1244812 365207 E2_Rep4 923-924 (2 runs)

1244811 365206 E2_Rep3 921-922 (2 runs)

1244810 365205 E2_Rep2 919-920 (2 runs) 10nM E2 treatment for 24h

1244809 365204 E2_Rep1 917-918 (2 runs) 10nM E2 treatment for 24h

# Step 1. Download and convert data

library(SRAdb)

setwd("~/GSE51403")

if( ! file.exists('SRAmetadb.sqlite') ) {

# sqlfile <- getSRAdbFile()

sqlfile <- "~/Anders2013/SRAmetadb.sqlite"

} else {

sqlfile <- 'SRAmetadb.sqlite'

}

sra_con <- dbConnect(SQLite(),sqlfile)

fs <- listSRAfile("SRP031476", sra_con, fileType = "sra" ) # 38 files

# getSRAfile("SRP031476", sra_con, fileType = "sra" ) # starting to download

getSRAfile(fs$run[13], sra_con, fileType='sra') # SRR1012917.sra

getSRAfile(fs$run[30], sra_con, fileType='sra') # SRR1012918.sra

dbDisconnect( sra_con )

fs <- dir(pattern='sra')

# for(f in fs) system(paste("fastq-dump --split-3", f)) # Single thread, Not efficient

scrp <- paste("/opt/RNA-Seq/bin/sratoolkit.2.3.5-2-ubuntu64/bin/fastq-dump --split-3", fs)

library(parallel)

cl <- makeCluster(getOption("cl.cores", 5))

# note that if something is wrong, we need to delete broken fastq first and then run again.

system.time(clusterApply(cl, scrp, function(x) system(x)))

stopCluster(cl)

# Step 2. Create samples object

samples <- data.frame(LibraryName = c(paste("Ctrl_Rep", 7:1, sep=''),

paste("E2_Rep", 7:1, sep='')),

LibraryLayout = rep("SINGLE", 14),

fastq1=c(paste(paste("SRR", 1012952:1012954, ".fastq", sep=''), collapse=','),

paste(paste("SRR", 1012949:1012951, ".fastq", sep=''), collapse=','),

paste(paste("SRR", 1012946:1012948, ".fastq", sep=''), collapse=','),

paste(paste("SRR", 1012943:1012945, ".fastq", sep=''), collapse=','),

paste(paste("SRR", 1012940:1012942, ".fastq", sep=''), collapse=','),

paste(paste("SRR", 1012937:1012939, ".fastq", sep=''), collapse=','),

paste(paste("SRR", 1012934:1012936, ".fastq", sep=''), collapse=','),

paste(paste("SRR", 1012931:1012933, ".fastq", sep=''), collapse=','),

paste(paste("SRR", 1012928:1012930, ".fastq", sep=''), collapse=','),

paste(paste("SRR", 1012925:1012927, ".fastq", sep=''), collapse=','),

paste(paste("SRR", 1012923:1012924, ".fastq", sep=''), collapse=','),

paste(paste("SRR", 1012921:1012922, ".fastq", sep=''), collapse=','),

paste(paste("SRR", 1012919:1012920, ".fastq", sep=''), collapse=','),

paste(paste("SRR", 1012917:1012918, ".fastq", sep=''), collapse=',')),

fastq2=rep("", 14),

countf=c(paste("Ctrl_Rep", 7:1, ".count", sep=''),

paste("E2_Rep", 7:1, ".count", sep='')))

# Step 3: run tophat2

i <- 10

system.time(paste("tophat2 -G genes.gtf -p 5 -o",

samples$LibraryName[i],

"genome",

samples$fastq1[i]))

# system("tophat2 -G genes.gtf -p 5 -o E2_Rep5 genome SRR1012925.fastq")

# system("tophat2 -G genes.gtf -p 5 -o E2_Rep5 genome SRR1012925.fastq,SRR1012926.fastq,SRR1012927.fastq")

for(i in c(1:14)) {

system(paste("tophat2 -G genes.gtf -p 5 -o", samples$LibraryName[i], "genome", samples$fastq1[i]))

}

# Step 4. Organize, sort and index the BAM files and create SAM files.

for(i in seq_len(nrow(samples)) {

lib = samples$LibraryName[i]

ob = file.path(lib, "accepted_hits.bam")

# sort by name, convert to SAM for htseq-count

c1 <- paste0("samtools sort -n ", ob, " ", lib, "_sn")

c2 <- paste0("samtools view -o ", lib, "_sn.sam ", lib, "_sn.bam")

system(c1)

system(c2)

}

samtools sort -n Sample1/accepted_hits.bam Sample1_sn # 283 seconds

samtools view -o Sample1_sn.sam Sample1_sn.bam # 38 seconds

# samtools sort Sample1/accepted_hits.bam Sample1_s

# samtools index Sample1_s.bam

# Step 5. Count reads using htseq-count

htseq-count -s no -a 10 Sample1_sn.sam Mus_musculus.GRCm38.70.gtf > Sample1.count # 759 seconds

htseq-count -s no -a 10 Sample2_sn.sam Mus_musculus.GRCm38.70.gtf > Sample2.count

samples$countsf <- paste(samples$LibraryName, "count", sep=".")

gf <- "genes.gtf"

cmd <- paste0("htseq-count -s no -a 10 ", samples$LibraryName, "_sn.sam ", gf, " > ", samples$countf)

system.time(clusterApply(cl, cmd, function(x) system(x))) # 119 minutes

samplesDESeq = data.frame(shortname = c("Sample1", "Sample2"), countf=c("Sample1.count", "Sample2.count"),

condition = c("CTL", "KD"), LibraryLayout =c("SINGLE", "SINGLE"))

library("DESeq")

cds = newCountDataSetFromHTSeqCount(samplesDESeq)

cds = estimateSizeFactors(cds)

sizeFactors(cds)

GSE37544

GSE11045

Probe Region Expression Estimation for RNA-Seq Data for Improved Microarray Comparability

GSE37704

http://www.gettinggeneticsdone.com/2015/12/tutorial-rna-seq-differential.html

GSE64570, GSE69244, GSE72165

Differential analyses for RNA-seq: transcript-level estimates improve gene-level inferences

SRA

SRA000299

Note that one fastq file can represent several channels/lanes. The lane information can be extracted from fastq definition line (first line of each read). For example,

@SRR002321.1 080226_CMLIVERKIDNEY_0007:2:1:115:885

"SRR002321.1" is the short read archive name, where the .1 is the read number, "080226_CMLIVERKIDNEY_0007" should be the Machine name, which has been arbitrarily changed

- "2" is the Channel/lane number

- "1" is the Tile number

- "115" is the X position

- "885" is the Y position

The website also shows how to extract reads with the same run from fastq files.

In this example, there are

- two full sequencing runs (this info can be obtained from paper)

- 4 experiments (SRX000571, SRX000604, SRX000605, SRX000606)

- 6 runs (SRRR002321, SRR002323, SRR002322, SRR002320, SRR002325, SRR002324)

- 7 lanes (eg "080226_CMLIVERKIDNEY_0007:2") for each full sequencing run

SRA data linked from QuickNGS

Include Chip-Seq, RNA-Seq, WGS and miRNA-Seq. QuickNGS is a production environment for quick batch-wise analysis of Next-Generation Sequencing (NGS) data in high-throughput laboratories.

European Nucleotide Archive/ENA

DDBJ

ENCODE

http://www.ncbi.nlm.nih.gov/geo/info/ENCODE.html has a list of data with GSE number.

1000 genomes

Human BodyMap 2.0 data from Illumina

- http://www.ensembl.info/blog/2011/05/24/human-bodymap-2-0-data-from-illumina/

- https://usegalaxy.org/u/jeremy/p/galaxy-rna-seq-analysis-exercise. Galaxy needs to install hg19 genome before we can use tophat or bowtie. See Galaxy/Wiki/Admin/Data Preparation page. Genome can be downloaded from tophat webpage. It is 20GB for hg19! We can also download just chromosome 19 fasta file chr19.fa.gz from UCSC genomes FTP server and it is only 19MB. We can then create reference index files (.bt2) by using bowtie2-build command. If we use Galaxy, we don't need to create reference index files.

- Impact of gene annotation on RNA-Seq data analysis

TCGA/The Cancer Genome Atlas

- Broad Institute TCGA GDAC

- https://wiki.nci.nih.gov/display/TCGA/RNASeq

- https://www.biostars.org/p/128132/

- RTCGA package from Bioconductor.

The TCGA Data Portal does not host lower levels of sequence data. NCI's Cancer Genomics Hub (CGHub) is the new secure repository for storing, cataloging, and accessing BAM files and metadata for sequencing data (need to 1. register from HHS to get an access 2. request access to the secure data set).

UCI

- Machine learning repository (not RNA-Seq)

ReCount

- ReCount: A multi-experiment resource of analysis-ready RNA-seq gene count datasets

- http://bowtie-bio.sourceforge.net/recount/

- Two datasets were used in Getting the most out of RNA-seq data analysis

Other RNA-Seq tools

http://en.wikipedia.org/wiki/List_of_RNA-Seq_bioinformatics_tools

Galaxy

The first 3 lines will install galaxy on local computer. The last command will start Galaxy. PS. It will take a while to start the first time.

hg clone https://bitbucket.org/galaxy/galaxy-dist/ cd galaxy-dist hg update stable sh run.sh

A screenshot of the available tools is given below. Strangely, I did not find "NGS: RNA Analysis" in the NGS: ToolBox on left hand side.

We cannot upload > 2GB fastq data. For such large dataset (we have one 3.3 GB fastq in this case), Galaxy asks to use http or ftp to load the data. I install Apache in Ubuntu and put this fastq file there for upload.

Galaxy does not include bowtie, tophat, .... by default. See this post and Galaxy wiki about the installation. Another option is to modify line 614 of 'universe_wsgl.ini' file and enable ourselves as adminstrator. Also modify line 146 to add a path for installing tools through Galaxy Admin page.

If you submit a lot of jobs (eg each tophat2 generates 5 jobs) some of jobs cannot be run immediately. In my experience, there is a 25 jobs cap. Running jobs has a yellow background color. Waiting for run jobs has a gray background color.