R: Difference between revisions


= Install and upgrade R = | |||

[[Install_R|Here]]

== New release ==

* R 4.4.0 | |||

** [https://www.r-bloggers.com/2024/04/whats-new-in-r-4-4-0/ What’s new in R 4.4.0?] | |||

** [https://www.r-bloggers.com/2024/05/cve-2024-27322-should-never-have-been-assigned-and-r-data-files-are-still-super-risky-even-in-r-4-4-0/ CVE-2024-27322 Should Never Have Been Assigned And R Data Files Are Still Super Risky Even In R 4.4.0], [https://www.ithome.com.tw/news/162626 R programming language found to have a code execution vulnerability that can be used for supply chain attacks] (in Chinese), [https://www.bleepingcomputer.com/news/security/r-language-flaw-allows-code-execution-via-rds-rdx-files/ R language flaw allows code execution via RDS/RDX files], [https://www.r-bloggers.com/2024/05/a-security-issue-with-r-serialization/ A security issue with R serialization] and the [https://cran.r-project.org/web/packages/RAppArmor/index.html RAppArmor] Package.

* R 4.3.0 | |||

** [https://www.jumpingrivers.com/blog/whats-new-r43/ What's new in R 4.3.0?] | |||

** Extracting from a pipe. The underscore _ can be used to refer to the final value from a pipeline <code style="display:inline-block;">mtcars |> lm(mpg ~ disp, data = _) |> _$coef</code>. Previously we needed to use [https://stackoverflow.com/a/56038303 this way] or [https://stackoverflow.com/a/60873298 this way]. If we want to apply some (anonymous) function to each element of a list, use '''map(), map_dbl()''' from the [https://purrr.tidyverse.org/ purrr] package.

* R 4.2.0 | |||

** Calling if() or while() with a condition of length greater than one gives an error rather than a warning. | |||

** [https://twitter.com/henrikbengtsson/status/1501306369319735300 use underscore (_) as a placeholder on the right-hand side (RHS) of a forward pipe]. For example, '''mtcars |> subset(cyl == 4) |> lm(mpg ~ disp, data = _) ''' | |||

** [https://developer.r-project.org/Blog/public/2022/04/08/enhancements-to-html-documentation/ Enhancements to HTML Documentation] | |||

** [https://www.jumpingrivers.com/blog/new-features-r420/ New features in R 4.2.0] | |||

* R 4.1.0 | |||

** [https://developer.r-project.org/blosxom.cgi/R-devel/2021/01/13#n2021-01-13 pipe and shorthand for creating a function] | |||

** [https://www.jumpingrivers.com/blog/new-features-r410-pipe-anonymous-functions/ New features in R 4.1.0] '''anonymous functions''' (lambda function) | |||

* R 4.0.0 | |||

** [https://blog.revolutionanalytics.com/2020/04/r-400-is-released.html R 4.0.0 now available, and a look back at R's history] | |||

** [https://www.infoworld.com/article/3540989/major-r-language-update-brings-big-changes.html R 4.0.0 brings numerous and significant changes to syntax, strings, reference counting, grid units, and more], [https://www.infoworld.com/article/3541368/how-to-run-r-40-in-docker-and-3-cool-new-r-40-features.html R 4.0: 3 new features] | |||

**# Character vectors are no longer converted to factors by default when creating a data frame (stringsAsFactors = FALSE)

**# palette() function has a new default set of colours, and [[R#New_palette_in_R_4.0.0|palette.colors() & palette.pals()]] are new | |||

**# r"(YourString)" for ''raw'' character constants. See ?Quotes | |||

* R 3.6.0 | |||

** [https://blog.revolutionanalytics.com/2019/05/whats-new-in-r-360.html What's new in R 3.6.0] | |||

*** Changes to random number generation | |||

*** More functions now support vectors with more than 2 billion elements | |||

* R 3.5.0 | |||

** [https://community.rstudio.com/t/error-listing-packages-error-in-readrds-pfile-cannot-read-workspace-version-3-written-by-r-3-6-0/40570/2 The default serialization format for R changed in May 2018, such that new default format (version 3) for workspaces saved can no longer be read by versions of R older than 3.5] | |||
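A few of the changes listed above can be tried directly in a console. The following is only a minimal sketch, assuming R 4.2 or later; the object names (df, win_path, squares, fit_coef, rds_v2) are made up for illustration, and the R 4.3 extraction syntax is left as a comment so the snippet still parses on R 4.2.

```r
# R >= 4.0: character columns stay character (no automatic factor conversion)
df <- data.frame(id = c("a", "b"))
class(df$id)

# R >= 4.0: raw character constants, no need to double the backslashes
win_path <- r"(C:\R\R-4.4.0\bin)"

# R >= 4.1: native pipe |> and the \(x) shorthand for function(x)
squares <- 1:3 |> sapply(\(x) x^2)

# R >= 4.2: underscore placeholder for a named argument on the RHS of |>
fit_coef <- mtcars |> subset(cyl == 4) |> lm(mpg ~ disp, data = _) |> coef()

# R >= 4.3 only (experimental): extract directly from the placeholder
# mtcars |> lm(mpg ~ disp, data = _) |> _$coef

# R >= 3.5 writes serialization format version 3 by default;
# version = 2 keeps the file readable by older R versions
rds_v2 <- tempfile(fileext = ".rds")
saveRDS(df, rds_v2, version = 2)
df_back <- readRDS(rds_v2)
```

Requesting version = 2 explicitly is mainly useful when the saved file has to be shared with someone still running R older than 3.5.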

= Online Editor = | |||

We can run R in a web browser without installing it on a local machine (similar to [https://ideone.com Ideone.com] for C++). It does not require an account either (cf. RStudio).

== [https://rdrr.io/snippets/ rdrr.io] == | |||

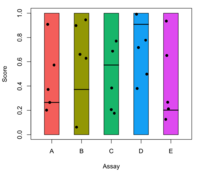

It can produce graphics too. The package I am testing ([https://www.rdocumentation.org/packages/cobs/versions/1.3-3/topics/cobs cobs]) is available too.

== rstudio.cloud == | |||

== [https://www.rdocumentation.org/ RDocumentation] == | |||

The interactive engine is based on [https://github.com/datacamp/datacamp-light DataCamp Light]


The packages on RDocumentation may be outdated. For example, the current stringr on CRAN is v1.2.0 (2/18/2017) but RDocumentation has v1.1.0 (8/19/2016).

= Web Applications = | |||

[[R_web|R web applications]] | |||

= Creating local repository for CRAN and Bioconductor = | |||

[[R_repository|R repository]] | |||

= Parallel Computing =

See [[R_parallel|R parallel]].

= Cloud Computing =

== Install R on Amazon EC2 == | |||

http://randyzwitch.com/r-amazon-ec2/ | |||

== Bioconductor on Amazon EC2 ==

http://www.bioconductor.org/help/bioconductor-cloud-ami/ | |||

= Big Data Analysis =

* [https://cran.r-project.org/web/views/HighPerformanceComputing.html CRAN Task View: High-Performance and Parallel Computing with R]

* [http://www.xmind.net/m/LKF2/ R for big data] in one picture | |||

* [https://rstudio-pubs-static.s3.amazonaws.com/72295_692737b667614d369bd87cb0f51c9a4b.html Handling large data sets in R] | |||

* [https://www.oreilly.com/library/view/big-data-analytics/9781786466457/#toc-start Big Data Analytics with R] by Simon Walkowiak | |||

* [https://pbdr.org/publications.html pbdR] | |||

** https://en.wikipedia.org/wiki/Programming_with_Big_Data_in_R | |||

** [https://olcf.ornl.gov/wp-content/uploads/2016/01/pbdr.pdf Programming with Big Data in R - pbdR] George Ostrouchov and Mike Matheson Oak Ridge National Laboratory | |||

== bigmemory, biganalytics, bigtabulate ==

== ff, ffbase ==

* tapply does not work. [https://stackoverflow.com/questions/16470677/using-tapply-ave-functions-for-ff-vectors-in-r Using tapply, ave functions for ff vectors in R]

* [http://www.bnosac.be/index.php/blog/12-popularity-bigdata-large-data-packages-in-r-and-ffbase-user-presentation Popularity bigdata / large data packages in R and ffbase useR presentation]

* [http://www.bnosac.be/images/bnosac/blog/user2013_presentation_ffbase.pdf ffbase: statistical functions for large datasets] in useR 2013

* [https://www.rdocumentation.org/packages/ffbase/versions/0.12.7/topics/ffbase-package ffbase] package

== biglm == | |||

== data.table == | |||

See [[Tidyverse#data.table|data.table]]. | |||

== disk.frame == | |||

[https://www.brodrigues.co/blog/2019-10-05-parallel_maxlik/ Split-apply-combine for Maximum Likelihood Estimation of a linear model] | |||

== Apache arrow == | |||

* https://arrow.apache.org/docs/r/

* [https://www.infoworld.com/article/3637038/the-best-open-source-software-of-2021.html#slide17 The best open source software of 2021] | |||

= Reproducible Research = | |||

* http://cran.r-project.org/web/views/ReproducibleResearch.html | |||

* [[Reproducible|Reproducible]] | |||

== Reproducible Environments == | |||

https://rviews.rstudio.com/2019/04/22/reproducible-environments/ | |||

== checkpoint package == | |||

* https://cran.r-project.org/web/packages/checkpoint/index.html | |||

* [https://timogrossenbacher.ch/2017/07/a-truly-reproducible-r-workflow/ A (truly) reproducible R workflow] | |||

== Some lessons in R coding == | |||

# Don't use rand() and srand() in C. The result is platform dependent. In my experience Ubuntu/Debian/CentOS give the same result, but it differs from macOS and Windows. Use the [[Rcpp|Rcpp]] package and R's random number generator instead.

# Don't use [https://www.rdocumentation.org/packages/base/versions/3.6.1/topics/list.files list.files()] directly. The order of the result is platform dependent, even across different Linux distributions. An extra [https://www.rdocumentation.org/packages/base/versions/3.6.1/topics/sort sorting] step helps!
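The second lesson can be sketched in a few lines of base R. This is an illustration under stated assumptions, not a tested recipe for every platform: the scratch directory and file names are hypothetical, and method = "radix" is chosen because it sorts character vectors in the C locale, so the order does not depend on the machine's collation settings.

```r
# Create a scratch directory with files in a deliberately mixed order
d <- tempfile("listfiles_demo_")
dir.create(d)
invisible(file.create(file.path(d, c("b.txt", "A.txt", "c.txt"))))

# Sort explicitly instead of relying on the order list.files() returns;
# radix sorting of character vectors uses C-locale collation
files <- sort(list.files(d), method = "radix")
print(files)

# For random numbers generated in R, fix the seed so runs are repeatable
set.seed(1)
x1 <- runif(3)
set.seed(1)
x2 <- runif(3)
identical(x1, x2)
```

In the C locale uppercase letters sort before lowercase ones, so "A.txt" comes first here regardless of the operating system.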

= Useful R packages =

* [https://support.rstudio.com/hc/en-us/articles/201057987-Quick-list-of-useful-R-packages Quick list of useful R packages] | |||

* [https://github.com/qinwf/awesome-R awesome-R] | |||

* [https://stevenmortimer.com/one-r-package-a-day/ One R package a day] | |||

== Rcpp == | |||

http://cran.r-project.org/web/packages/Rcpp/index.html. See more [[Rcpp|here]]. | |||

== RInside : embed R in C++ code == | |||

* http://dirk.eddelbuettel.com/code/rinside.html

* http://dirk.eddelbuettel.com/papers/rfinance2010_rcpp_rinside_tutorial_handout.pdf

=== Ubuntu === | |||

With RInside, R can be embedded in a graphical application. For example, $HOME/R/x86_64-pc-linux-gnu-library/3.0/RInside/examples/qt directory includes source code of a Qt application to show a kernel density plot with various options like kernel functions, bandwidth and an R command text box to generate the random data. See my demo on [http://www.youtube.com/watch?v=UQ8yKQcPTg0 Youtube]. I have tested this '''qtdensity''' example successfully using Qt 4.8.5. | |||

# Follow the instructions in [[#cairoDevice|cairoDevice]] to install the libraries required by the cairoDevice package, and then cairoDevice itself.

# Install [[Qt|Qt]]. Check that the 'qmake' command is available by typing 'whereis qmake' or 'which qmake' in a terminal.

# Open Qt Creator from the Ubuntu start menu/Launcher. Open the project file $HOME/R/x86_64-pc-linux-gnu-library/3.0/RInside/examples/qt/qtdensity.pro in Qt Creator.

# In Qt Creator, hit 'Ctrl + R' or the big green triangle button in the lower-left corner to build/run the project. If everything works well, you should see the ''interactive'' program qtdensity appear on your desktop.

[[:File:qtdensity.png]] | |||

With RInside + [http://www.webtoolkit.eu/wt Wt web toolkit] installed, we can also create a web application. To demonstrate the example in ''examples/wt'' directory, we can do | |||

<pre>

cd ~/R/x86_64-pc-linux-gnu-library/3.0/RInside/examples/wt | |||

make | |||

sudo ./wtdensity --docroot . --http-address localhost --http-port 8080 | |||

</pre>

Then we can go to the browser's address bar and type ''http://localhost:8080'' to see how it works (a screenshot is in [http://dirk.eddelbuettel.com/blog/2011/11/30/ here]). | |||

=== Windows 7 === | |||

To make RInside work on Windows, try the following:

# Make sure R is installed under '''C:\''' instead of '''C:\Program Files''' if we don't want to get an error like ''g++.exe: error: Files/R/R-3.0.1/library/RInside/include: No such file or directory''.

# Install RTools

# Install the RInside package from source (the binary version will give an [http://stackoverflow.com/questions/13137770/fatal-error-unable-to-open-the-base-package error])

# Create a DOS batch file containing necessary paths in PATH environment variable | |||

<pre>

@echo off | |||

set PATH=C:\Rtools\bin;c:\Rtools\gcc-4.6.3\bin;%PATH% | |||

set PATH=C:\R\R-3.0.1\bin\i386;%PATH%

set PKG_LIBS=`Rscript -e "Rcpp:::LdFlags()"` | |||

set PKG_CPPFLAGS=`Rscript -e "Rcpp:::CxxFlags()"` | |||

set R_HOME=C:\R\R-3.0.1 | |||

echo Setting environment for using R | |||

cmd | |||

</pre> | |||

In the Windows command prompt, run | |||

<pre> | |||

cd C:\R\R-3.0.1\library\RInside\examples\standard | |||

make -f Makefile.win | |||

</pre> | |||

Now we can test by running any of executable files that '''make''' generates. For example, ''rinside_sample0''. | |||

<pre> | |||

rinside_sample0 | |||

</pre>

As for the Qt application qtdensity, we need to make sure the same version of MinGW was used to build RInside/Rcpp and Qt. See some discussions in

* http://stackoverflow.com/questions/12280707/using-rinside-with-qt-in-windows | |||

* http://www.mail-archive.com/rcpp-devel@lists.r-forge.r-project.org/msg04377.html

So the Qt and Wt web tool applications on Windows may or may not be possible. | |||

== GUI ==

=== Qt and R ===

* http://cran.r-project.org/web/packages/qtbase/index.html [https://stat.ethz.ch/pipermail/r-devel/2015-July/071495.html QtDesigner is such a tool, and its output is compatible with the qtbase R package] | |||

* http://qtinterfaces.r-forge.r-project.org | |||

== tkrplot ==

On Ubuntu, we need to install tk packages, such as by | |||

<pre> | |||

sudo apt-get install tk-dev | |||

</pre> | |||

== reticulate - Interface to 'Python' ==

[[Python#R_and_Python:_reticulate_package|Python -> reticulate]] | |||

== Hadoop (eg ~100 terabytes) ==

See also [http://cran.r-project.org/web/views/HighPerformanceComputing.html HighPerformanceComputing]

* RHadoop

* Hive

* [http://cran.r-project.org/web/packages/mapReduce/ MapReduce]. Introduction by [http://www.linuxjournal.com/content/introduction-mapreduce-hadoop-linux Linux Journal]. | |||

* http://www.techspritz.com/category/tutorials/hadoopmapredcue/ Single node or multinode cluster setup using Ubuntu with VirtualBox (Excellent) | |||

* [http://www.michael-noll.com/tutorials/running-hadoop-on-ubuntu-linux-single-node-cluster/ Running Hadoop on Ubuntu Linux (Single-Node Cluster)] | |||

* Ubuntu 12.04 http://www.youtube.com/watch?v=WN2tJk_oL6E and [https://www.dropbox.com/s/05aurcp42asuktp/Chiu%20Hadoop%20Pig%20Install%20Instructions.docx instruction] | |||

* Linux Mint http://blog.hackedexistence.com/installing-hadoop-single-node-on-linux-mint | |||

* http://www.r-bloggers.com/search/hadoop | |||

=== [https://github.com/RevolutionAnalytics/RHadoop/wiki RHadoop] ===

* [http://www.rdatamining.com/tutorials/r-hadoop-setup-guide RDataMining.com] based on Mac.

* Ubuntu 12.04 - [http://crishantha.com/wp/?p=1414 Crishantha.com], [http://nikhilshah123sh.blogspot.com/2014/03/setting-up-rhadoop-in-ubuntu-1204.html nikhilshah123sh.blogspot.com]. [http://bighadoop.wordpress.com/2013/02/25/r-and-hadoop-data-analysis-rhadoop/ Bighadoop.wordpress] contains an example.

* MapReduce in R by [https://github.com/RevolutionAnalytics/rmr2/blob/master/docs/tutorial.md RevolutionAnalytics] with a few examples.

* https://twitter.com/hashtag/rhadoop | |||

* [http://bigd8ta.com/step-by-step-guide-to-setting-up-an-r-hadoop-system/ Bigd8ta.com] based on Ubuntu 14.04. | |||

=== Snowdoop: an alternative to MapReduce algorithm ===

* http://matloff.wordpress.com/2014/11/26/how-about-a-snowdoop-package/ | |||

* http://matloff.wordpress.com/2014/12/26/snowdooppartools-update/comment-page-1/#comment-665 | |||

== [http://cran.r-project.org/web/packages/XML/index.html XML] ==

On Ubuntu, we need to install libxml2-dev before we can install the XML package.

<pre> | |||

sudo apt-get update | |||

sudo apt-get install libxml2-dev | |||

</pre> | |||

On CentOS, | |||

<pre> | |||

yum -y install libxml2 libxml2-devel | |||

</pre> | |||

=== XML ===

* http://giventhedata.blogspot.com/2012/06/r-and-web-for-beginners-part-ii-xml-in.html. It gave an example of extracting the XML-values from each XML-tag for all nodes and save them in a data frame using '''xmlSApply()'''. | |||

* http://www.quantumforest.com/2011/10/reading-html-pages-in-r-for-text-processing/ | |||

* https://tonybreyal.wordpress.com/2011/11/18/htmltotext-extracting-text-from-html-via-xpath/ | |||

* https://www.tutorialspoint.com/r/r_xml_files.htm | |||

* https://www.datacamp.com/community/tutorials/r-data-import-tutorial#xml | |||

* [http://www.stat.berkeley.edu/~statcur/Workshop2/Presentations/XML.pdf Extracting data from XML] PubMed and Zillow are used to illustrate. xmlTreeParse(), xmlRoot(), xmlName() and xmlSApply(). | |||

* https://yihui.name/en/2010/10/grabbing-tables-in-webpages-using-the-xml-package/ | |||

{{Pre}} | |||

library(XML) | |||

# Read and parse HTML file | |||

doc.html = htmlTreeParse('http://apiolaza.net/babel.html', useInternal = TRUE) | |||

# Extract all the paragraphs (HTML tag is p, starting at | |||

# the root of the document). Unlist flattens the list to

# create a character vector. | |||

doc.text = unlist(xpathApply(doc.html, '//p', xmlValue)) | |||

# Replace all newlines by spaces

doc.text = gsub('\n', ' ', doc.text) | |||

# Join all the elements of the character vector into a single | |||

# character string, separated by spaces | |||

doc.text = paste(doc.text, collapse = ' ') | |||

</pre> | |||

This post http://stackoverflow.com/questions/25315381/using-xpathsapply-to-scrape-xml-attributes-in-r can be used to monitor new releases from github.com. | |||

{{Pre}} | |||

> library(RCurl) # getURL() | |||

> library(XML) # htmlParse and xpathSApply | |||

> xData <- getURL("https://github.com/alexdobin/STAR/releases") | |||

> doc = htmlParse(xData) | |||

> plain.text <- xpathSApply(doc, "//span[@class='css-truncate-target']", xmlValue) | |||

# I look at the source code and search 2.5.3a and find the tag as | |||

# <span class="css-truncate-target">2.5.3a</span> | |||

> plain.text | |||

[1] "2.5.3a" "2.5.2b" "2.5.2a" "2.5.1b" "2.5.1a" | |||

[6] "2.5.0c" "2.5.0b" "STAR_2.5.0a" "STAR_2.4.2a" "STAR_2.4.1d" | |||

> | |||

> # try bwa | |||

> xData <- getURL("https://github.com/lh3/bwa/releases")

> doc = htmlParse(xData) | |||

> xpathSApply(doc, "//span[@class='css-truncate-target']", xmlValue) | |||

[1] "v0.7.15" "v0.7.13" | |||

> # try picard | |||

> xData <- getURL("https://github.com/broadinstitute/picard/releases") | |||

> doc = htmlParse(xData) | |||

> xpathSApply(doc, "//span[@class='css-truncate-target']", xmlValue) | |||

[1] "2.9.1" "2.9.0" "2.8.3" "2.8.2" "2.8.1" "2.8.0" "2.7.2" "2.7.1" "2.7.0" | |||

[10] "2.6.0" | |||

</pre> | |||

This method can be used to monitor new tags/releases from some projects like [https://github.com/Ultimaker/Cura/releases Cura], BWA, Picard, [https://github.com/alexdobin/STAR/releases STAR]. But for some projects like [https://github.com/ncbi/sra-tools sratools] the '''class''' attribute in the '''span''' element ("css-truncate-target") can be different (such as "tag-name"). | |||

=== xmlview ===

* http://rud.is/b/2016/01/13/cobble-xpath-interactively-with-the-xmlview-package/ | |||

== RCurl == | |||

On Ubuntu, we need to install the packages (the first one is for XML package that RCurl suggests) | |||

{{Pre}} | |||

# Test on Ubuntu 14.04 | |||

sudo apt-get install libxml2-dev | |||

sudo apt-get install libcurl4-openssl-dev | |||

</pre> | |||

=== Scrape google scholar results === | |||

https://github.com/tonybreyal/Blog-Reference-Functions/blob/master/R/googleScholarXScraper/googleScholarXScraper.R | |||

No Google ID is required.

It does not seem to work:

<pre>

Error in data.frame(footer = xpathLVApply(doc, xpath.base, "/font/span[@class='gs_fl']", : | |||

arguments imply differing number of rows: 2, 0 | |||

</pre>

=== [https://cran.r-project.org/web/packages/devtools/index.html devtools] === | |||

The '''devtools''' package depends on curl, which in turn requires some system libraries. If we just need to install a package, consider the [[#remotes|remotes]] package, which was suggested by the [https://cran.r-project.org/web/packages/BiocManager/index.html BiocManager] package.

{{Pre}} | |||

# Ubuntu 14.04 | |||

sudo apt-get install libcurl4-openssl-dev | |||

# Ubuntu 16.04, 18.04 | |||

sudo apt-get install build-essential libcurl4-gnutls-dev libxml2-dev libssl-dev | |||

# Ubuntu 20.04 | |||

sudo apt-get install -y libxml2-dev libcurl4-openssl-dev libssl-dev | |||

</pre>

[https://github.com/wch/movies/issues/3 Lazy-load database XXX is corrupt. internal error -3]. It often happens when you use install_github to install a package that's currently loaded; try restarting R and running the app again. | |||

NB. According to the output of '''apt-cache show r-cran-devtools''', the binary package is very old though '''apt-cache show r-base''' and [https://cran.r-project.org/bin/linux/ubuntu/#supported-packages supported packages] like ''survival'' shows the latest version. | |||

=== [https://github.com/hadley/httr httr] ===

httr imports curl, jsonlite, mime, openssl and R6 packages. | |||

When I tried to install httr package, I got an error and some message: | |||

<pre>

Configuration failed because openssl was not found. Try installing: | |||

* deb: libssl-dev (Debian, Ubuntu, etc) | |||

* rpm: openssl-devel (Fedora, CentOS, RHEL) | |||

* csw: libssl_dev (Solaris) | |||

* brew: openssl (Mac OSX) | |||

If openssl is already installed, check that 'pkg-config' is in your | |||

PATH and PKG_CONFIG_PATH contains a openssl.pc file. If pkg-config | |||

is unavailable you can set INCLUDE_DIR and LIB_DIR manually via: | |||

R CMD INSTALL --configure-vars='INCLUDE_DIR=... LIB_DIR=...' | |||

--------------------------------------------------------------------

ERROR: configuration failed for package ‘openssl’

</pre>

After I ran '''sudo apt-get install libssl-dev''' in the terminal (Debian), the httr package installed smoothly. Nice httr!

Real example: see [http://stackoverflow.com/questions/27371372/httr-retrieving-data-with-post this post]. Unfortunately I did not get a table result; I only got an html file (R 3.2.5, httr 1.1.0 on Ubuntu and Debian).

Since the httr package is used by many other packages, take a look at how others use it. For example, the [https://github.com/ropensci/aRxiv aRxiv] package.

[https://www.statsandr.com/blog/a-package-to-download-free-springer-books-during-covid-19-quarantine/ A package to download free Springer books during Covid-19 quarantine], [https://www.radmuzom.com/2020/05/03/an-update-to-an-adventure-in-downloading-books/ An update to "An adventure in downloading books"] (rvest package) | |||

=== [http://cran.r-project.org/web/packages/curl/ curl] ===

curl is independent of RCurl package. | |||

* http://cran.r-project.org/web/packages/curl/vignettes/intro.html | |||

* https://www.opencpu.org/posts/curl-release-0-8/ | |||

{{Pre}}

library(curl) | |||

h <- new_handle() | |||

handle_setform(h, | |||

name="aaa", email="bbb" | |||

) | |||

req <- curl_fetch_memory("http://localhost/d/phpmyql3_scripts/ch02/form2.html", handle = h) | |||

rawToChar(req$content) | |||

</pre> | |||

=== [http://ropensci.org/packages/index.html rOpenSci] packages === | |||

'''rOpenSci''' contains packages that allow access to data repositories through the R statistical programming environment | |||

== [https://cran.r-project.org/web/packages/remotes/index.html remotes] == | |||

Download and install R packages stored in 'GitHub', 'BitBucket', or plain 'subversion' or 'git' repositories. This package is a lightweight replacement of the 'install_*' functions in 'devtools'. Also remotes does not require any extra OS level library (at least on Ubuntu 16.04). | |||

Example: | |||

{{Pre}} | |||

# https://github.com/henrikbengtsson/matrixstats | |||

remotes::install_github('HenrikBengtsson/matrixStats@develop') | |||

</pre> | |||

== DirichletMultinomial == | |||

On Ubuntu, we do | |||

<pre> | |||

sudo apt-get install libgsl0-dev | |||

</pre> | |||

== Create GUI ==

=== [http://cran.r-project.org/web/packages/gWidgets/index.html gWidgets] === | |||

== [http://cran.r-project.org/web/packages/GenOrd/index.html GenOrd]: Generate ordinal and discrete variables with given correlation matrix and marginal distributions == | |||

[http://statistical-research.com/simulating-random-multivariate-correlated-data-categorical-variables/?utm_source=rss&utm_medium=rss&utm_campaign=simulating-random-multivariate-correlated-data-categorical-variables here] | |||

== json ==

[[R_web#json|R web -> json]] | |||

== Map == | |||

=== [https://rstudio.github.io/leaflet/ leaflet] === | |||

* https://rstudio.github.io/leaflet/#installation-and-use

* https://metvurst.wordpress.com/2015/07/24/mapview-basic-interactive-viewing-of-spatial-data-in-r-6/ | |||

=== choroplethr === | |||

* http://blog.revolutionanalytics.com/2014/01/easy-data-maps-with-r-the-choroplethr-package-.html | |||

* http://www.arilamstein.com/blog/2015/06/25/learn-to-map-census-data-in-r/ | |||

* http://www.arilamstein.com/blog/2015/09/10/user-question-how-to-add-a-state-border-to-a-zip-code-map/ | |||

=== ggplot2 === | |||

[https://randomjohn.github.io/r-maps-with-census-data/ How to make maps with Census data in R] | |||

== [http://cran.r-project.org/web/packages/googleVis/index.html googleVis] == | |||

See an example from [[R#RJSONIO|RJSONIO]] above. | |||

== [https://cran.r-project.org/web/packages/googleAuthR/index.html googleAuthR] ==

Create R functions that interact with OAuth2 Google APIs easily, with auto-refresh and Shiny compatibility. | |||

== gtrendsR - Google Trends == | |||

* [http://blog.revolutionanalytics.com/2015/12/download-and-plot-google-trends-data-with-r.html Download and plot Google Trends data with R] | |||

* [https://datascienceplus.com/analyzing-google-trends-data-in-r/ Analyzing Google Trends Data in R] | |||

* [https://trends.google.com/trends/explore?date=2004-01-01%202017-09-04&q=microarray%20analysis microarray analysis] from 2004-01-01

* [https://trends.google.com/trends/explore?date=2004-01-01%202017-09-04&q=ngs%20next%20generation%20sequencing ngs next generation sequencing] from 2004-01-01

* [https://trends.google.com/trends/explore?date=2004-01-01%202017-09-04&q=dna%20sequencing dna sequencing] from 2004-01-01. | |||

* [https://trends.google.com/trends/explore?date=2004-01-01%202017-09-04&q=rna%20sequencing rna sequencing] from 2004-01-01. It can be seen RNA sequencing >> DNA sequencing. | |||

* [http://www.kdnuggets.com/2017/09/python-vs-r-data-science-machine-learning.html?utm_content=buffere1df7&utm_medium=social&utm_source=twitter.com&utm_campaign=buffer Python vs R – Who Is Really Ahead in Data Science, Machine Learning?] and [https://stackoverflow.blog/2017/09/06/incredible-growth-python/ The Incredible Growth of Python] by [https://twitter.com/drob?lang=en David Robinson] | |||

== quantmod == | |||

[http://www.thertrader.com/2015/12/13/maintaining-a-database-of-price-files-in-r/ Maintaining a database of price files in R]. It consists of 3 steps. | |||

# Initial data downloading | |||

# Update existing data | |||

# Create a batch file | |||

== [http://cran.r-project.org/web/packages/caret/index.html caret] == | |||

* http://topepo.github.io/caret/index.html & https://github.com/topepo/caret/ | |||

* https://www.r-project.org/conferences/useR-2013/Tutorials/kuhn/user_caret_2up.pdf | |||

* https://github.com/cran/caret source code mirrored on github | |||

* Cheatsheet https://www.rstudio.com/resources/cheatsheets/ | |||

* [https://daviddalpiaz.github.io/r4sl/the-caret-package.html Chapter 21 of "R for Statistical Learning"] | |||

== Tool for connecting Excel with R == | |||

* https://bert-toolkit.com/ | |||

* [http://www.thertrader.com/2016/11/30/bert-a-newcomer-in-the-r-excel-connection/ BERT: a newcomer in the R Excel connection] | |||

* http://blog.revolutionanalytics.com/2018/08/how-to-use-r-with-excel.html | |||

== write.table == | |||

=== Output a named vector === | |||

<pre> | |||

vec <- c(a = 1, b = 2, c = 3) | |||

write.csv(vec, file = "my_file.csv", quote = FALSE)

x = read.csv("my_file.csv", row.names = 1) | |||

vec2 <- x[, 1] | |||

names(vec2) <- rownames(x) | |||

all.equal(vec, vec2) | |||

# one liner: row names of a 'matrix' become the names of a vector

vec3 <- as.matrix(read.csv('my_file.csv', row.names = 1))[, 1] | |||

all.equal(vec, vec3) | |||

</pre> | |||

=== Avoid leading empty column to header === | |||

[https://stackoverflow.com/a/2478624 write.table writes unwanted leading empty column to header when has rownames] | |||

<pre> | |||

write.table(a, 'a.txt', col.names=NA) | |||

# Or better by | |||

write.table(data.frame("SeqId"=rownames(a), a), "a.txt", row.names=FALSE) | |||

</pre>

=== Add blank field AND column names in write.table ===

* '''write.table'''() writes one fewer field on the first (header) row than on the data rows when row.names = TRUE, which is the default.

** Suppose x is (n x 2)

** write.table(x, sep="\t") will generate a file with 2 elements on the 1st row

** read.table(file) will return an object of size (n x 2)

** read.delim(file) and read.delim2(file) will also be correct

* Note that '''write.csv'''() does not have this issue

** Suppose x is (n x 2)

** Suppose we use write.csv(x, file). The csv file will be ((n+1) x 3) because of the header row and the row-name column.

** If we use read.csv(file), the object is (n x 3). So we need to use '''read.csv(file, row.names = 1)'''

* adding blank field AND column names in write.table(); [https://stackoverflow.com/a/2478624 write.table writes unwanted leading empty column to header when has rownames] | |||

:<syntaxhighlight lang="rsplus"> | |||

write.table(a, 'a.txt', col.names=NA) | |||

</syntaxhighlight> | |||

* '''readr::write_tsv'''() does not include row names in the output file | |||
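The behaviour described in this list can be checked with a small round trip. The sketch below uses only base R; the data frame a and the temporary file names are made up for illustration.

```r
# A small (3 x 2) data frame with explicit row names
a <- data.frame(x = 1:3, y = c(2.5, 3.5, 4.5),
                row.names = c("r1", "r2", "r3"))

f_default <- tempfile(fileext = ".txt")
f_blank   <- tempfile(fileext = ".txt")
f_csv     <- tempfile(fileext = ".csv")

# Default: the header row has one fewer field than the data rows
write.table(a, f_default, sep = "\t", quote = FALSE)
# col.names = NA adds a blank leading field to the header row
write.table(a, f_blank, sep = "\t", quote = FALSE, col.names = NA)
# write.csv always writes the blank leading header field
write.csv(a, f_csv)

header_default <- strsplit(readLines(f_default, n = 1), "\t")[[1]]
header_blank   <- strsplit(readLines(f_blank, n = 1), "\t")[[1]]

# Reading back: a short header makes the first column the row names
from_default <- read.delim(f_default)
from_blank   <- read.delim(f_blank, row.names = 1)
csv_raw      <- read.csv(f_csv)                 # row names become a column
csv_named    <- read.csv(f_csv, row.names = 1)  # row names restored

dim(from_default); dim(from_blank); dim(csv_raw); dim(csv_named)
```

Files written with col.names = NA open cleanly in a spreadsheet because every row has the same number of fields.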

=== read.delim(, row.names=1) and write.table(, row.names=TRUE) === | |||

[https://www.statology.org/read-delim-in-r/ How to Use read.delim Function in R] | |||

Case 1: no row.names | |||

<pre> | |||

write.table(df, 'my_data.txt', quote=FALSE, sep='\t', row.names=FALSE) | |||

my_df <- read.delim('my_data.txt') # the rownames will be 1, 2, 3, ... | |||

</pre> | |||

Case 2: with row.names. '''Note:''' if we open the text file in Excel, we'll see the 1st row is missing one header at the end. It is actually missing the column name for the 1st column. | |||

<pre> | |||

write.table(df, 'my_data.txt', quote=FALSE, sep='\t', row.names=TRUE) | |||

my_df <- read.delim('my_data.txt') # it will automatically assign the rownames | |||

</pre> | |||


== Read/Write Excel files package == | |||

* http://www.milanor.net/blog/?p=779

* [https://www.displayr.com/how-to-read-an-excel-file-into-r/?utm_medium=Feed&utm_source=Syndication flipAPI]. One useful feature of DownloadXLSX, which is not supported by the readxl package, is that it can read Excel files directly from the URL.

* [http://cran.r-project.org/web/packages/xlsx/index.html xlsx]: depends on Java

** [https://stackoverflow.com/a/17976604 Export both Image and Data from R to an Excel spreadsheet]

* [http://cran.r-project.org/web/packages/openxlsx/index.html openxlsx]: does not depend on Java, but depends on a zip application. On Windows, it seems to be OK without installing Rtools. It cannot read xls files; it works only on xlsx files.

** It can't be used to open .xls or .xlm files.

** When I tried the package to read an xlsx file, I got a warning: No data found on worksheet. 6/28/2018

** [https://fabiomarroni.wordpress.com/2018/08/07/use-r-to-write-multiple-tables-to-a-single-excel-file/ Use R to write multiple tables to a single Excel file] | |||

* [https://github.com/hadley/readxl readxl]: it has no external dependencies, but it can only read Excel files, not write them.

** It is part of the tidyverse. The [https://readxl.tidyverse.org/index.html readxl] website provides several articles with more examples.

** [https://github.com/rstudio/webinars/tree/master/36-readxl readxl webinar].

** One advantage of read_excel (as with read_csv in the readr package) is that the data is imported into an easy-to-print object with three classes: '''tbl_df''', '''tbl''' and '''data.frame'''.

** For writing to Excel formats, use writexl or openxlsx package. | |||

:<syntaxhighlight lang='rsplus'> | |||

library(readxl) | |||

read_excel(path, sheet = NULL, range = NULL, col_names = TRUE, | |||

col_types = NULL, na = "", trim_ws = TRUE, skip = 0, n_max = Inf, | |||

guess_max = min(1000, n_max), progress = readxl_progress(), | |||

.name_repair = "unique") | |||

# Example | |||

read_excel(path, range = cell_cols("c:cx"), col_types = "numeric") | |||

</syntaxhighlight> | |||

* [https://ropensci.org/blog/technotes/2017/09/08/writexl-release writexl]: zero dependency xlsx writer for R | |||

:<syntaxhighlight lang='rsplus'> | |||

library(writexl) | |||

mylst <- list(sheet1name = df1, sheet2name = df2) | |||

write_xlsx(mylst, "output.xlsx") | |||

</syntaxhighlight> | |||

For the Chromosome column, integer values become strings (after conversion to double, so 5 becomes 5.000000) and empty cells become NA.

{{Pre}} | |||

> head(read_excel("~/Downloads/BRCA.xls", 4)[ , -9], 3) | |||

UniqueID (Double-click) CloneID UGCluster | |||

1 HK1A1 21652 Hs.445981 | |||

2 HK1A2 22012 Hs.119177 | |||

3 HK1A4 22293 Hs.501376 | |||

Name Symbol EntrezID | |||

1 Catenin (cadherin-associated protein), alpha 1, 102kDa CTNNA1 1495 | |||

2 ADP-ribosylation factor 3 ARF3 377 | |||

3 Uroporphyrinogen III synthase UROS 7390 | |||

Chromosome Cytoband ChimericClusterIDs Filter | |||

1 5.000000 5q31.2 <NA> 1 | |||

2 12.000000 12q13 <NA> 1 | |||

3 <NA> 10q25.2-q26.3 <NA> 1 | |||

</pre> | |||

The hidden worksheets become visible (I am not sure what the DEFINEDNAME rows at the top of the output mean).

{{Pre}} | |||

> excel_sheets("~/Downloads/BRCA.xls") | |||

DEFINEDNAME: 21 00 00 01 0b 00 00 00 02 00 00 00 00 00 00 0d 3b 01 00 00 00 9a 0c 00 00 1a 00 | |||

DEFINEDNAME: 21 00 00 01 0b 00 00 00 04 00 00 00 00 00 00 0d 3b 03 00 00 00 9b 0c 00 00 0a 00 | |||

DEFINEDNAME: 21 00 00 01 0b 00 00 00 03 00 00 00 00 00 00 0d 3b 02 00 00 00 9a 0c 00 00 06 00 | |||

[1] "Experiment descriptors" "Filtered log ratio" "Gene identifiers" | |||

[4] "Gene annotations" "CollateInfo" "GeneSubsets" | |||

[7] "GeneSubsetsTemp" | |||

</pre> | |||

Chinese characters work too.

{{Pre}} | |||

> read_excel("~/Downloads/testChinese.xlsx", 1) | |||

中文 B C | |||

1 a b c | |||

2 1 2 3 | |||

</pre> | |||

To read all worksheets, we need a convenience function. The one below skips the first sheet and reads every column as numeric.

{{Pre}} | |||

read_excel_allsheets <- function(filename) { | |||

sheets <- readxl::excel_sheets(filename) | |||

sheets <- sheets[-1] # Skip sheet 1 | |||

x <- lapply(sheets, function(X) readxl::read_excel(filename, sheet = X, col_types = "numeric")) | |||

names(x) <- sheets | |||

x | |||

} | |||

dcfile <- "table0.77_dC_biospear.xlsx" | |||

dc <- read_excel_allsheets(dcfile) | |||

# Each component (eg dc[[1]]) is a tibble. | |||

</pre> | |||

=== [https://cran.r-project.org/web/packages/readr/ readr] ===

Compared to base equivalents like '''read.csv()''', '''readr''' is much faster and gives more convenient output: it never converts strings to factors, can parse date/times, and it doesn’t munge the column names. | |||

[https://blog.rstudio.org/2016/08/05/readr-1-0-0/ 1.0.0] released. [https://www.tidyverse.org/blog/2021/07/readr-2-0-0/ readr 2.0.0] adds built-in support for reading multiple files at once, fast multi-threaded lazy reading and automatic guessing of delimiters among other changes. | |||

Consider a [http://www.cs.utoronto.ca/~juris/data/cmapbatch/instmatx.21.txt text file] where the table (6100 x 22) has duplicated row names and the (1,1) element is empty. The column names are all unique. | |||

* read.delim() will treat the first column as row names, but it does not allow duplicated row names. Even if we use row.names=NULL, the file is still not read correctly. It does give warnings (EOF within quoted string & number of items read is not a multiple of the number of columns). The resulting dim is 5177 x 22.

* readr::read_delim(Filename, "\t") will miss the last column. The dim is 6100 x 21. | |||

* '''data.table::fread(Filename, sep = "\t")''' will detect the number of column names is less than the number of columns. Added 1 extra default column name for the first column which is guessed to be row names or an index. The dim is 6100 x 22. (Winner!) | |||

The '''readr::read_csv()''' function is as fast as '''data.table::fread()''' function. ''For files beyond 100MB in size fread() and read_csv() can be expected to be around 5 times faster than read.csv().'' See 5.3 of Efficient R Programming book. | |||

Note that '''data.table::fread()''' can read a selection of the columns. | |||

=== Speed comparison ===

[https://predictivehacks.com/the-fastest-way-to-read-and-write-file-in-r/ The Fastest Way To Read And Write Files In R]. data.table >> readr >> base.

== [http://cran.r-project.org/web/packages/ggplot2/index.html ggplot2] ==

See [[Ggplot2|ggplot2]]

== Data Manipulation & Tidyverse ==

See [[Tidyverse|Tidyverse]].

== Data Science ==

See [[Data_science|Data science]] page

== microbenchmark & rbenchmark ==

* [https://cran.r-project.org/web/packages/microbenchmark/index.html microbenchmark]

** [https://www.r-bloggers.com/using-the-microbenchmark-package-to-compare-the-execution-time-of-r-expressions/ Using the microbenchmark package to compare the execution time of R expressions] | |||

* [https://cran.r-project.org/web/packages/rbenchmark/index.html rbenchmark] (not updated since 2012) | |||

== Plot, image == | |||

=== [http://cran.r-project.org/web/packages/jpeg/index.html jpeg] === | |||

If we want to create the image on this wiki left hand side panel, we can use the '''jpeg''' package to read an existing plot and then edit and save it. | |||

We can also use the jpeg package to import and manipulate a jpg image. See [http://moderndata.plot.ly/fun-with-heatmaps-and-plotly/ Fun with Heatmaps and Plotly]. | |||

=== EPS/postscript format === | |||

<ul> | |||

<li>Don't use postscript(). | |||

<li>Use cairo_ps(). See [http://www.sthda.com/english/wiki/saving-high-resolution-ggplots-how-to-preserve-semi-transparency Saving High-Resolution ggplots: How to Preserve Semi-Transparency]. It works on base R plots too.

<syntaxhighlight lang='r'> | |||

cairo_ps(filename = "survival-curves.eps", | |||

width = 7, height = 7, pointsize = 12, | |||

fallback_resolution = 300) | |||

print(p) # or any base R plots statements | |||

dev.off() | |||

</syntaxhighlight> | |||

<li>[https://stackoverflow.com/a/8147482 Export a graph to .eps file with R]. | |||

* The result looks the same as with cairo_ps().

* The file produced by setEPS() + postscript() is much smaller than the one produced by cairo_ps().

* However, '''grep''' can find the characters shown on the plot generated by cairo_ps() but not setEPS() + postscript(). | |||

<pre> | |||

setEPS() | |||

postscript("whatever.eps") # 483 KB | |||

plot(rnorm(20000)) | |||

dev.off() | |||

# grep rnorm whatever.eps # Not found! | |||

cairo_ps("whatever_cairo.eps") # 2.4 MB | |||

plot(rnorm(20000)) | |||

dev.off() | |||

# grep rnorm whatever_cairo.eps # Found! | |||

</pre> | |||

<li> View EPS files

* Linux: evince. It is installed by default. | |||

* Mac: evince. ''' brew install evince''' | |||

* Windows. Install '''ghostscript''' [https://www.npackd.org/p/com.ghostscript.Ghostscript64/9.20 9.20] (10.x does not work with ghostview/GSview) and '''ghostview/GSview''' (5.0). In Ghostview, open Options -> Advanced Configure. Change '''Ghostscript DLL''' path AND '''Ghostscript include Path''' according to the ghostscript location ("C:\. | |||

<li>Edit EPS files: Inkscape | |||

* Step 1: open the EPS file | |||

* Step 2: EPS Input: Determine page orientation from text direction 'Page by page' - OK | |||

* Step 3: PDF Import Settings: default is "Internal import", but we shall choose '''"Cairo import"'''. | |||

* Step 4: '''Zoom in''' first. | |||

* Step 5: Click on '''Layers and Objects''' tab on the RHS. Now we can select any lines or letters and edit them as we like. The selected objects are highlighted in the "Layers and Objects" panel. That is, we can select multiple objects using object names. The selected objects can be rotated (Object -> Rotate 90 CW), for example. | |||

* Step 6: We can save the plot in many formats, such as svg, eps, pdf, html, ...

</ul> | |||

=== png and resolution ===

It seems people use '''res=300''' as a definition of high resolution.

<ul>

<li>Bottom line: fix res=300 and adjust height/width as needed. The default is res=72, height=width=480. If we increase res to 300 while keeping the same pixel dimensions, the text gets larger, lines become thicker and the plot looks zoomed in.

<li>[https://stackoverflow.com/a/51194014 Saving high resolution plot in png]. | |||

<pre> | |||

png("heatmap.png", width = 8, height = 6, units='in', res = 300) | |||

# we can adjust width/height as we like | |||

# the pixel values will be width=8*300 and height=6*300 which is equivalent to | |||

# 8*300 * 6*300/10^6 = 4.32 Megapixels (1M pixels = 10^6 pixels) in camera's term | |||

# However, if we use png(, width=8*300, height=6*300, units='px'), it will produce | |||

# a plot with very large figure body and tiny text font size. | |||

# It seems the following command gives the same result as above | |||

png("heatmap.png", width = 8*300, height = 6*300, res = 300) # default units="px" | |||

</pre> | |||

<li>Chapter 14.5 [https://r-graphics.org/recipe-output-bitmap Outputting to Bitmap (PNG/TIFF) Files] by R Graphics Cookbook | |||

* Changing the resolution affects the size (in pixels) of graphical objects like text, lines, and points. | |||

<li>[https://blog.revolutionanalytics.com/2009/01/10-tips-for-making-your-r-graphics-look-their-best.html 10 tips for making your R graphics look their best] David Smith | |||

* In Word you can resize the graphic to an appropriate size, but the high resolution gives you the flexibility to choose a size while not compromising on the quality. I'd recommend '''at least 1200 pixels''' on the longest side for standard printers. | |||

<li>[https://stat.ethz.ch/R-manual/R-devel/library/grDevices/html/png.html ?png]. The png function has default settings ppi=72, height=480, width=480, units="px". | |||

* By default no resolution is recorded in the file, except for BMP. | |||

* [https://www.adobe.com/creativecloud/file-types/image/comparison/bmp-vs-png.html BMP vs PNG format]. If you need a smaller file size and don’t mind a lossless compression, PNG might be a better choice. If you need to retain as much detail as possible and don’t mind a larger file size, BMP could be the way to go. | |||

** '''Compression''': BMP files are raw and uncompressed, meaning they’re large files that retain as much detail as possible. On the other hand, PNG files are compressed but still lossless. This means you can reduce or expand PNGs without losing any information.

** '''File size''': BMPs are larger than PNGs. This is because PNG files automatically compress, and can be compressed again to make the file even smaller.

** '''Common uses''': BMP contains a maximum amount of details while PNGs are good for small illustrations, sketches, drawings, logos and icons. | |||

** '''Quality''': No difference | |||

** '''Transparency''': PNG supports transparency while BMP doesn't | |||

<li>Some comparison about the ratio | |||

* 11/8.5=1.29 (US Letter paper; A4 is 297/210=1.41)

* 8/6=1.33 (plot output) | |||

* 1440/900=1.6 (my display) | |||

<li>[https://babichmorrowc.github.io/post/2019-05-23-highres-figures/ Setting resolution and aspect ratios in R] | |||

<li>The difference of '''res''' parameter for a simple plot. [https://www.tutorialspoint.com/how-to-change-the-resolution-of-a-plot-in-base-r How to change the resolution of a plot in base R?] | |||

<li>[https://danieljhocking.wordpress.com/2013/03/12/high-resolution-figures-in-r/ High Resolution Figures in R]. | |||

<li>[https://magesblog.com/post/2013-10-29-high-resolution-graphics-with-r/ High resolution graphics with R] | |||

<li>[https://stackoverflow.com/questions/8399100/r-plot-size-and-resolution R plot: size and resolution] | |||

<li>[https://stackoverflow.com/a/22815896 How can I increase the resolution of my plot in R?], [https://cran.r-project.org/web/packages/devEMF/index.html devEMF] package | |||

<li>See [[Images#Anti-alias_%E4%BF%AE%E9%82%8A|Images -> Anti-alias]]. | |||

<li>How to check DPI on PNG | |||

* '''The width of a PNG file in terms of inches cannot be determined directly from the file itself''', as the file contains pixel dimensions, not physical dimensions. However, '''you can calculate the width in inches if you know the resolution (DPI, dots per inch) of the image'''. Remember that converting pixel measurements to physical measurements like inches involves a specific resolution (DPI), and different devices may display the same image at different sizes due to having different resolutions. | |||

<li>[https://community.rstudio.com/t/save-high-resolution-figures-from-r-300dpi/62016/3 Cairo] case. | |||

</ul> | |||
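The relation between inches, '''res''' and pixels discussed above can be checked directly. This is a minimal sketch, assuming a throwaway temporary file and a trivial plot; it reads the pixel dimensions back from the PNG header, where the format stores width and height as big-endian 4-byte integers.

```r
# Target size in inches and resolution in pixels per inch
width_in <- 8
height_in <- 6
res <- 300
width_px  <- width_in * res
height_px <- height_in * res
megapixels <- width_px * height_px / 1e6

# Write a plot with the size given in inches
f <- tempfile(fileext = ".png")
png(f, width = width_in, height = height_in, units = "in", res = res)
plot(1:10)
invisible(dev.off())

# Bytes 17-24 of a PNG file hold the width and height in pixels
hdr  <- readBin(f, what = "raw", n = 24)
dims <- readBin(hdr[17:24], what = "integer", n = 2, size = 4, endian = "big")
print(dims)
```

The dimensions read from the file should match <code>width_px</code> and <code>height_px</code>, which is why fixing res and adjusting width/height in inches is a convenient way to control the output.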

=== PowerPoint ===

<ul>

<li>For PowerPoint presentations, I found it useful to generate a small figure with svg(). When we then enlarge the plot, the text is enlarged with it. According to [https://www.rdocumentation.org/packages/grDevices/versions/3.6.2/topics/cairo svg], the defaults are width = 7, height = 7, pointsize = 12, family = '''sans'''.

<li>Try the following code. The font size is the same for both plots/files. However, the first plot can be enlarged without losing its quality. | |||

<pre> | |||

svg("svg4.svg", width=4, height=4) | |||

plot(1:10, main="width=4, height=4") | |||

dev.off() | |||

svg("svg7.svg", width=7, height=7) # default | |||

plot(1:10, main="width=7, height=7") | |||

dev.off() | |||

</pre> | |||

</ul> | |||


=== magick ===

https://cran.r-project.org/web/packages/magick/ | |||

See an example [[:File:Progpreg.png|here]] I created. | |||

=== [http://cran.r-project.org/web/packages/Cairo/index.html Cairo] === | |||

See [[Heatmap#White_strips_.28artifacts.29|White strips problem]] in png() or tiff(). | |||

=== grDevices ===

* [https://www.jumpingrivers.com/blog/r-graphics-cairo-png-pdf-saving/ Saving R Graphics across OSs]. Use png(type="cairo-png") or the [https://cran.r-project.org/web/packages/ragg/index.html ragg] package which can be incorporated into RStudio. | |||

* [https://www.jumpingrivers.com/blog/r-knitr-markdown-png-pdf-graphics/ Setting the Graphics Device in a RMarkdown Document] | |||

=== [https://cran.r-project.org/web/packages/cairoDevice/ cairoDevice] === | |||

PS. I am not sure what advantage the functions in this package have over R's own functions (e.g. Cairo_svg() vs svg()).

On Ubuntu, we need to install 2 libraries and 1 R package '''RGtk2'''.

<pre> | |||

sudo apt-get install libgtk2.0-dev libcairo2-dev | |||

</pre> | |||

On Windows, we may get the error: '''unable to load shared object 'C:/Program Files/R/R-3.0.2/library/cairoDevice/libs/x64/cairoDevice.dll' '''. We need to follow the instructions [http://tolstoy.newcastle.edu.au/R/e6/help/09/05/15613.html here].

=== dpi requirement for publication ===

[http://www.cookbook-r.com/Graphs/Output_to_a_file/ For import into PDF-incapable programs (MS Office)] | |||

=== sketcher: photo to sketch effects === | |||

https://htsuda.net/sketcher/ | |||

=== httpgd ===

* https://nx10.github.io/httpgd/ A graphics device for R that is accessible via network protocols. Display graphics on browsers. | |||

* [https://youtu.be/uxyhmhRVOfw Three tricks to make IDEs other than Rstudio better for R development] | |||

== [http://igraph.org/r/ igraph] == | |||

[[R_web#igraph|R web -> igraph]] | |||

== Identifying dependencies of R functions and scripts ==

https://stackoverflow.com/questions/8761857/identifying-dependencies-of-r-functions-and-scripts | |||

{{Pre}} | |||

library(mvbutils) | |||

foodweb(where = "package:batr") | |||

foodweb( find.funs("package:batr"), prune="survRiskPredict", lwd=2)

foodweb( find.funs("package:batr"), prune="classPredict", lwd=2) | |||

</pre> | |||

== [http://cran.r-project.org/web/packages/iterators/ iterators] ==

An iterator is more convenient than a for-loop when the data is already a '''collection'''. It can be used to iterate over a vector, data frame, matrix or file.

Iterators can be combined with the foreach package; http://www.exegetic.biz/blog/2013/11/iterators-in-r/ has more details.

== Colors ==

* [https://scales.r-lib.org/ scales] package. This is used in ggplot2 package.

<ul>

<li>[https://cran.r-project.org/web/packages/colorspace/index.html colorspace]: A Toolbox for Manipulating and Assessing Colors and Palettes. Popular! Many reverse imports/suggests; e.g. ComplexHeatmap. See my [[Ggplot2#colorspace_package|ggplot2]] page. | |||

<pre> | |||

hcl_palettes(plot = TRUE) # a quick overview

hcl_palettes(palette = "Dark 2", n=5, plot = T)

q4 <- qualitative_hcl(4, palette = "Dark 3")

</pre> | |||

</ul> | |||

* [https://statisticsglobe.com/create-color-range-between-two-colors-in-r Create color range between two colors in R] using colorRampPalette() | |||

* [http://novyden.blogspot.com/2013/09/how-to-expand-color-palette-with-ggplot.html How to expand color palette with ggplot and RColorBrewer] | |||

* palette_explorer() function from the [https://cran.r-project.org/web/packages/tmaptools/index.html tmaptools] package. See [https://www.computerworld.com/article/3184778/data-analytics/6-useful-r-functions-you-might-not-know.html selecting color palettes with shiny]. | |||

* [http://www.cookbook-r.com/ Cookbook for R] | |||

* [http://ggplot2.tidyverse.org/reference/scale_brewer.html Sequential, diverging and qualitative colour scales/palettes from colorbrewer.org]: scale_colour_brewer(), scale_fill_brewer(), ... | |||

* http://colorbrewer2.org/ | |||

* It seems there is no way to get only 2 colors, whichever set name we use: brewer.pal() requires n to be at least 3.

* To see the set names used in brewer.pal, see | |||

** [https://www.rdocumentation.org/packages/RColorBrewer/versions/1.1-2/topics/RColorBrewer RColorBrewer::display.brewer.all()] | |||

** [https://rpubs.com/flowertear/224344 Output] | |||

** Especially, '''[http://colorbrewer2.org/#type=qualitative&scheme=Set1&n=4 Set1]''' from http://colorbrewer2.org/ | |||

* To list all R color names, colors(). | |||

** [http://research.stowers.org/mcm/efg/R/Color/Chart/ColorChart.pdf Color Chart] (include Hex and RGB) & [http://research.stowers.org/mcm/efg/Report/UsingColorInR.pdf Using Color in R] from http://research.stowers.org | |||

** Code to generate rectangles with colored background https://www.r-graph-gallery.com/42-colors-names/ | |||

* http://www.bauer.uh.edu/parks/truecolor.htm Interactive RGB, Alpha and Color Picker | |||

* http://deanattali.com/blog/colourpicker-package/ Not sure what it is doing | |||

* [http://www.lifehack.org/484519/how-to-choose-the-best-colors-for-your-data-charts How to Choose the Best Colors For Your Data Charts] | |||

* [http://novyden.blogspot.com/2013/09/how-to-expand-color-palette-with-ggplot.html How to expand color palette with ggplot and RColorBrewer] | |||

* [http://sape.inf.usi.ch/quick-reference/ggplot2/colour Color names in R] | |||

<ul> | |||

<li>[https://stackoverflow.com/questions/28461326/convert-hex-color-code-to-color-name convert hex value to color names] | |||

{{Pre}} | |||

library(plotrix) | |||

sapply(rainbow(4), color.id) # color.id is a function | |||

# it is used to identify closest match to a color | |||

sapply(palette(), color.id) | |||

sapply(RColorBrewer::brewer.pal(4, "Set1"), color.id) | |||

</pre> | |||

</li></ul> | |||

* [https://www.rdocumentation.org/packages/grDevices/versions/3.5.3/topics/hsv hsv()] function. [https://eranraviv.com/matrix-style-screensaver-in-r/ Matrix-style screensaver in R] | |||

Below is an example using the option ''scale_fill_brewer''(palette = "[http://colorbrewer2.org/#type=qualitative&scheme=Paired&n=9 Paired]"). See the source code at [https://gist.github.com/JohannesFriedrich/c7d80b4e47b3331681cab8e9e7a46e17 gist]. Note that only the '''Paired''' and '''Set3''' palettes in the '''qualitative scheme''' support up to 12 classes ('''Set1''' supports up to 9).

According to the information from the ColorBrewer website, '''qualitative''' schemes do not imply magnitude differences between legend classes, and hues are used to create the primary visual differences between classes.

[[:File:GgplotPalette.svg]] | |||
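When a palette with an arbitrary number of colors is needed (including just 2), base R's colorRampPalette() is one option. This is a minimal sketch; the end-point colors "blue" and "red" are arbitrary choices for illustration.

```r
# colorRampPalette() returns a function that interpolates between the given colors
ramp <- colorRampPalette(c("blue", "red"))

two  <- ramp(2)   # just the two end points
five <- ramp(5)   # end points plus three intermediate colors

# Inspect the RGB components of the generated hex codes (3 rows: red, green, blue)
rgb_five <- col2rgb(five)
print(five)
print(rgb_five)
```

The returned values are hex strings, so they can be passed to any <code>col=</code> argument or to ggplot2's manual scales.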


=== [http://rpubs.com/gaston/colortools colortools] ===

Tools that allow users to generate color schemes and palettes

=== [https://github.com/daattali/colourpicker colourpicker] ===

A Colour Picker Tool for Shiny and for Selecting Colours in Plots | |||

=== eyedroppeR === | |||

[http://gradientdescending.com/select-colours-from-an-image-in-r-with-eyedropper/ Select colours from an image in R with {eyedroppeR}] | |||

== [https://github.com/kevinushey/rex rex] == | |||

Friendly Regular Expressions | |||

== [http://cran.r-project.org/web/packages/formatR/index.html formatR] == | |||

'''The best strategy to avoid failure is to put comments in complete lines or after complete R expressions.''' | |||

See also [http://stackoverflow.com/questions/3017877/tool-to-auto-format-r-code this discussion] on Stack Overflow about R code reformatting.

<pre> | |||

library(formatR) | |||

tidy_source("Input.R", file = "output.R", width.cutoff=70) | |||

tidy_source("clipboard") | |||

# default width is getOption("width") which is 127 in my case. | |||

</pre>

Some issues

* Comments appearing at the beginning of a line within a long complete statement. This will break tidy_source().

<pre>

cat("abcd", | |||

# This is my comment | |||

"defg") | |||

</pre>

will result in

<pre>

> tidy_source("clipboard") | |||

Error in base::parse(text = code, srcfile = NULL) : | |||

3:1: unexpected string constant | |||

2: invisible(".BeGiN_TiDy_IdEnTiFiEr_HaHaHa# This is my comment.HaHaHa_EnD_TiDy_IdEnTiFiEr") | |||

3: "defg" | |||

^ | |||

</pre>

* Comments appearing at the end of a line within a long complete statement ''won't break'' tidy_source() but tidy_source() cannot re-locate/tidy the comma sign.

<pre>

cat("abcd" | |||

,"defg" # This is my comment | |||

,"ghij") | |||


</pre>

will become

<pre>

cat("abcd", "defg" # This is my comment | |||

, "ghij") | |||

</pre>

Still bad!!

* In other cases, a comment appearing at the end of a line within a long complete statement ''breaks'' the tidy_source() function. For example,

<pre>

cat("</p>", | |||

"<HR SIZE=5 WIDTH=\"100%\" NOSHADE>", | |||

ifelse(codeSurv == 0,"<h3><a name='Genes'><b><u>Genes which are differentially expressed among classes:</u></b></a></h3>", #4/9/09 | |||

"<h3><a name='Genes'><b><u>Genes significantly associated with survival:</u></b></a></h3>"), | |||

file=ExternalFileName, sep="\n", append=T) | |||

</pre>

will result in | |||

<pre> | |||

> tidy_source("clipboard", width.cutoff=70) | |||

Error in base::parse(text = code, srcfile = NULL) : | |||

3:129: unexpected SPECIAL | |||

2: "<HR SIZE=5 WIDTH=\"100%\" NOSHADE>" , | |||

3: ifelse ( codeSurv == 0 , "<h3><a name='Genes'><b><u>Genes which are differentially expressed among classes:</u></b></a></h3>" , %InLiNe_IdEnTiFiEr% | |||

</pre> | |||

* The ''width.cutoff'' parameter does not always work. For example, the following snippet is left unchanged, though I hoped it would move the cat() call to the next line.

<pre> | |||

if (codePF & !GlobalTest & !DoExactPermTest) cat(paste("Multivariate Permutations test was computed based on", | |||

NumPermutations, "random permutations"), "<BR>", " ", file = ExternalFileName, | |||

sep = "\n", append = T) | |||

</pre> | |||

* It merges lines though I don't always want to do that. For example | |||

<pre>

cat("abcd" | |||

,"defg" | |||

,"ghij") | |||

</pre>

will become

<pre>

cat("abcd", "defg", "ghij") | |||

</pre>

== styler == | |||

https://cran.r-project.org/web/packages/styler/index.html Pretty-prints R code without changing the user's formatting intent. | |||

== Download papers == | |||

=== [http://cran.r-project.org/web/packages/biorxivr/index.html biorxivr] === | |||

Search and Download Papers from the bioRxiv Preprint Server (biology) | |||

=== [http://cran.r-project.org/web/packages/aRxiv/index.html aRxiv] === | |||

Interface to the arXiv API | |||

=== [https://cran.r-project.org/web/packages/pdftools/index.html pdftools] ===

* http://ropensci.org/blog/2016/03/01/pdftools-and-jeroen | |||

* http://r-posts.com/how-to-extract-data-from-a-pdf-file-with-r/ | |||

* https://ropensci.org/technotes/2018/12/14/pdftools-20/ | |||

== [https://github.com/ColinFay/aside aside]: set it aside == | |||

An RStudio addin to run long R commands aside your current session. | |||

== Teaching == | |||

* [https://cran.r-project.org/web/packages/smovie/vignettes/smovie-vignette.html smovie]: Some Movies to Illustrate Concepts in Statistics | |||

== Organize R research project == | |||

* [https://cran.r-project.org/web/views/ReproducibleResearch.html CRAN Task View: Reproducible Research] | |||

* [https://ntguardian.wordpress.com/2019/02/04/organizing-r-research-projects-cpat-case-study/ Organizing R Research Projects: CPAT, A Case Study] | |||

* [https://www.tidyverse.org/articles/2017/12/workflow-vs-script/ Project-oriented workflow]. It suggests the [https://github.com/r-lib/here here] package. Don't use '''setwd()''' and '''rm(list = ls())'''. | |||

** [https://rstats.wtf/safe-paths.html Practice safe paths]. Use projects and the [https://cran.r-project.org/web/packages/here/index.html here] package. | |||

** In RStudio, if we try to send a few lines of code and one of the lines contains '''setwd()''', it will give a message: ''The working directory was changed to XXX inside a notebook chunk. The working directory will be reset when the chunk is finished running. Use the knitr root.dir option in the setup chunk to change the working directory for notebook chunks.''

** [http://jenrichmond.rbind.io/post/how-to-use-the-here-package/ how to use the `here` package] | |||

** No update for the ''here'' package after 2020-12. Consider [https://github.com/r-lib/usethis usethis] package (Automate project and package setup). | |||

* drake project | |||

** [https://ropensci.org/blog/2018/02/06/drake/ The prequel to the drake R package] | |||

** [https://ropenscilabs.github.io/drake-manual/index.html The drake R Package User Manual] | |||

* [https://docs.ropensci.org/targets/ targets] package | |||

* [http://projecttemplate.net/ ProjectTemplate] | |||

=== How to save (and load) datasets in R (.RData vs .Rds file) ===

[https://rcrastinate.rbind.io/post/how-to-save-and-load-data-in-r-an-overview/ How to save (and load) datasets in R: An overview] | |||
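The practical difference between the two formats can be sketched as follows. This is a minimal illustration with a made-up data frame and temporary files: saveRDS()/readRDS() store a single object that can be assigned to any name, while save()/load() store named objects and restore them under their original names.

```r
# Hypothetical dataset
x <- data.frame(id = 1:3, score = c(0.5, 1.5, 2.5))

# .rds: one object per file; the caller chooses the name when reading
rds_file <- tempfile(fileext = ".rds")
saveRDS(x, rds_file)
y <- readRDS(rds_file)

# .RData: objects are stored with their names; load() restores those names
rdata_file <- tempfile(fileext = ".RData")
save(x, file = rdata_file)
env <- new.env()                       # load into a separate environment
loaded <- load(rdata_file, envir = env)  # returns the names of the restored objects
print(loaded)
```

Loading into a separate environment, as done here, avoids silently overwriting objects of the same name in the workspace.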

=== Naming convention ===

<ul> | |||

<li>[https://stackoverflow.com/a/1946879 What is your preferred style for naming variables in R?] | |||

* Use of period separator: they can get mixed up in simple method dispatch. However, it is used by base R ([https://www.rdocumentation.org/packages/base/versions/3.6.2/topics/make.names make.names()], read.table(), et al) | |||

* Use of underscores: really annoying for ESS users | |||

* '''camelCase''': Winner | |||

<li>However, the [https://stackoverflow.com/a/13413278 survey] said (no surprises perhaps) that | |||

* '''lowerCamelCase''' was most often used for function names, | |||

* '''period.separated''' names most often used for parameters. | |||

<li>[https://datamanagement.hms.harvard.edu/collect/file-naming-conventions What are file naming conventions?] | |||

<li>[https://www.r-bloggers.com/2014/07/consistent-naming-conventions-in-r/ Consistent naming conventions in R] | |||

<li>http://adv-r.had.co.nz/Style.html | |||

<li>[https://www.r-bloggers.com/2011/07/testing-for-valid-variable-names/ Testing for valid variable names] | |||

<li>R reserved words ?Reserved | |||

* [https://www.datamentor.io/r-programming/reserved-words/ R Reserved Words] | |||

* Among these words, if, else, repeat, while, function, for, '''in''', next and break are used for conditions, loops and user defined functions. | |||

<li>Microarray/RNA-seq data | |||

<pre>

clinicalDesignData # clnDesignData | |||

geneExpressionData # gExpData | |||

geneAnnotationData # gAnnoData | |||

dataClinicalDesign | |||

dataGeneExpression | |||

dataAnnotation | |||

</pre>

<pre>

# Search all variables ending with .Data | |||

ls()[grep("\\.Data$", ls())] | |||

# Search all variables starting with data_

ls()[grep("^data_", ls())] | |||

</pre>

</ul> | |||
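A consistent prefix or suffix makes objects easy to find. The sketch below is one way to do the search with the <code>pattern</code> argument of ls(); the object names are the hypothetical camelCase ones from the list above, created in a separate environment so the result is reproducible. It also shows how make.names() repairs invalid names.

```r
# Hypothetical objects kept in their own environment
e <- new.env()
assign("clinicalDesignData", 1, envir = e)
assign("geneExpressionData", 2, envir = e)
assign("dataAnnotation", 3, envir = e)

# ls() accepts a regular expression directly
ending_with_data   <- ls(envir = e, pattern = "Data$")
starting_with_data <- ls(envir = e, pattern = "^data")

# make.names() turns arbitrary strings into syntactically valid names
fixed <- make.names(c("gene expression", "1abc", "ok_name"))
print(ending_with_data)
print(starting_with_data)
print(fixed)
```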

=== Efficient Data Management in R === | |||

[https://www.mzes.uni-mannheim.de/socialsciencedatalab/article/efficient-data-r/ Efficient Data Management in R]. .Rprofile, renv package and dplyr package. | |||

== Text to speech ==

[https://shirinsplayground.netlify.com/2018/06/googlelanguager/ Text-to-Speech with the googleLanguageR package] | |||

== Speech to text == | |||

https://github.com/ggerganov/whisper.cpp and an R package [https://github.com/bnosac/audio.whisper audio.whisper] | |||

== Weather data ==

* [https://github.com/ropensci/prism prism] package | |||

* [http://www.weatherbase.com/weather/weather.php3?s=507781&cityname=Rockville-Maryland-United-States-of-America Weatherbase] | |||

== logR == | |||

https://github.com/jangorecki/logR | |||

== Progress bar == | |||

https://github.com/r-lib/progress#readme | |||

Configurable Progress bars, they may include percentage, elapsed time, and/or the estimated completion time. They work in terminals, in 'Emacs' 'ESS', 'RStudio', 'Windows' 'Rgui' and the 'macOS' 'R.app'.

== cron == | |||

* [https://github.com/bnosac/cronr cronR] | |||

* [https://mathewanalytics.com/building-a-simple-pipeline-in-r/ Building a Simple Pipeline in R] | |||

== beepr: Play A Short Sound ==

https://www.rdocumentation.org/packages/beepr/versions/1.3/topics/beep. Try sound=3 "fanfare", 4 "complete", 5 "treasure", 7 "shotgun", 8 "mario". | |||

== utils package == | |||

https://www.rdocumentation.org/packages/utils/versions/3.6.2 | |||

== tools package ==

* https://www.rdocumentation.org/packages/tools/versions/3.6.2

* [https://www.r-bloggers.com/2023/08/three-four-r-functions-i-enjoyed-this-week/ Where in the file are there non ASCII characters?], [https://rdocumentation.org/packages/tools/versions/3.6.2/topics/showNonASCII tools::showNonASCIIfile(<filename>)] | |||

* https://www.r-bloggers.com/ | |||
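A small sketch of '''tools::showNonASCIIfile()''' on a throwaway file. The file contents are invented for illustration; the second line contains one non-ASCII character.

```r
# Hypothetical two-line file; the second line contains U+00E9
lines <- c("plain ascii", "caf\u00e9")
tmp <- tempfile(fileext = ".txt")
# useBytes = TRUE writes the UTF-8 bytes unchanged, whatever the locale
writeLines(enc2utf8(lines), tmp, useBytes = TRUE)

# Reports each offending line with its line number and the
# non-ASCII bytes highlighted
tools::showNonASCIIfile(tmp)

unlink(tmp)
```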

= Different ways of using R =

[https://www.amazon.com/Extending-Chapman-Hall-John-Chambers/dp/1498775713 Extending R] by John M. Chambers (2016)

== 10 things R can do that might surprise you ==

https://simplystatistics.org/2019/03/13/10-things-r-can-do-that-might-surprise-you/

== R call C/C++ ==

This section mainly covers .C() and .Call().

Note that with .C(), scalars and arrays are both passed as pointers. So if we want to call a C function that is not exported from a package, we may need to modify the function so that all of its arguments are pointers.

* [http://cran.r-project.org/doc/manuals/R-exts.html R-Extension manual] of course.
* [http://r-pkgs.had.co.nz/src.html Compiled Code] chapter from 'R Packages' by Hadley Wickham
* http://faculty.washington.edu/kenrice/sisg-adv/sisg-07.pdf
* http://www.stat.berkeley.edu/scf/paciorek-cppWorkshop.pdf (Very useful)
* http://www.stat.harvard.edu/ccr2005/
* http://mazamascience.com/WorkingWithData/?p=1099
* [https://youtube.com/playlist?list=PLwc48KSH3D1OkObQ22NHbFwEzof2CguJJ Make an R package with C++ code] (a playlist from youtube)
* [https://working-with-data.mazamascience.com/2021/07/16/using-r-calling-c-code-hello-world/ Using R – Calling C code ‘Hello World!’]
* [http://www.haowulab.org//pages/computing.html Computing tip] by Hao Wu
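The pointer requirement of .C() can be sketched as follows. This is only an illustration under stated assumptions: the routine name <code>add_vec</code> and the file names are hypothetical, and a working C compiler (as needed by <code>R CMD SHLIB</code>) is assumed to be available. If the build fails, the call is skipped.

```r
# C source for a hypothetical routine: every argument is a pointer,
# including the scalar length n, because that is how .C() passes data
c_code <- '
void add_vec(double *x, double *y, int *n, double *out)
{
    for (int i = 0; i < *n; i++) out[i] = x[i] + y[i];
}
'

# Build the shared library in a temporary directory (needs a C compiler)
owd <- setwd(tempdir())
writeLines(c_code, "add_vec.c")
lib <- paste0("add_vec", .Platform$dynlib.ext)
status <- system2(file.path(R.home("bin"), "R"),
                  c("CMD", "SHLIB", "-o", lib, "add_vec.c"))
setwd(owd)

if (status == 0) {
  dyn.load(file.path(tempdir(), lib))
  x <- c(1, 2, 3)
  y <- c(10, 20, 30)
  # .C() returns a list of the (possibly modified) arguments;
  # PACKAGE restricts the symbol lookup to this shared library
  res <- .C("add_vec", as.double(x), as.double(y),
            as.integer(length(x)), out = double(length(x)),
            PACKAGE = "add_vec")
  print(res$out)
  dyn.unload(file.path(tempdir(), lib))
}
```

The explicit <code>as.double()</code> and <code>as.integer()</code> coercions matter because .C() does not convert types to match the C signature.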

=== .Call ===

* [https://www.rdocumentation.org/packages/base/versions/3.6.2/topics/CallExternal ?.Call]
* [http://mazamascience.com/WorkingWithData/?p=1099 Using R — .Call(“hello”)]
* http://adv-r.had.co.nz/C-interface.html
* [https://working-with-data.mazamascience.com/2021/07/16/using-r-callhello/ Using R – .Call(“hello”)]

Be sure to add the ''PACKAGE'' parameter to avoid an error like

<pre>
cvfit <- cv.grpsurvOverlap(X, Surv(time, event), group,
                           cv.ind = cv.ind, seed=1, penalty = 'cMCP')
Error in .Call("standardize", X) :
  "standardize" not resolved from current namespace (grpreg)
</pre>

=== NAMESPACE file & useDynLib ===

* https://cran.r-project.org/doc/manuals/r-release/R-exts.html#useDynLib
* When the routines are declared through '''useDynLib()''', we don't need to include double quotes around the C/Fortran subroutine names in .C() or .Fortran()
* digest package example: [https://github.com/cran/digest/blob/master/NAMESPACE NAMESPACE] and [https://github.com/cran/digest/blob/master/R/digest.R R functions] using .Call().
* stats example: [https://github.com/wch/r-source/blob/trunk/src/library/stats/NAMESPACE NAMESPACE]

(From [https://cran.r-project.org/doc/manuals/r-release/R-exts.html#dyn_002eload-and-dyn_002eunload Writing R Extensions manual]) Loading is most often done automatically based on the '''useDynLib()''' declaration in the '''NAMESPACE''' file, but may be done explicitly via a call to '''library.dynam()'''. This has the form

{{Pre}}
library.dynam("libname", package, lib.loc)
library
</pre>

=== library.dynam.unload() ===

* https://stat.ethz.ch/R-manual/R-devel/library/base/html/library.dynam.html
* http://r-pkgs.had.co.nz/src.html. The '''library.dynam.unload()''' call should be placed in the package's '''.onUnload()''' function. This function can be defined in any of the package's R files.
* digest package example [https://github.com/cran/digest/blob/master/R/zzz.R zzz.R]
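A minimal sketch of such an '''.onUnload()''' hook. The package name <code>mypkg</code> is a placeholder, and the sketch assumes the package's shared library has the same name; in a real package the function would typically sit in a file such as R/zzz.R.

```r
# 'mypkg' is a placeholder for the package (and shared library) name
.onUnload <- function(libpath) {
  # Unload the compiled code when the namespace is unloaded
  library.dynam.unload("mypkg", libpath)
}
```

R calls the hook with the path of the installed package, which is what '''library.dynam.unload()''' expects as its second argument.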

=== gcc ===

[http://rorynolan.rbind.io/2019/06/30/strexgcc/ Coping with varying `gcc` versions and capabilities in R packages]

=== Primitive functions ===

[https://nathaneastwood.github.io/2020/02/01/primitive-functions-list/ Primitive Functions List]

== SEXP ==

Some examples from packages

* [https://www.bioconductor.org/packages/release/bioc/html/sva.html sva] package has one C code function

== R call Fortran ==

* [https://stat.ethz.ch/pipermail/r-devel/2015-March/070851.html R call Fortran 90]
* [https://www.r-bloggers.com/the-need-for-speed-part-1-building-an-r-package-with-fortran-or-c/ The Need for Speed Part 1: Building an R Package with Fortran (or C)] (Very detailed)

== Embedding R ==

* See [http://cran.r-project.org/doc/manuals/R-exts.html#Linking-GUIs-and-other-front_002dends-to-R Writing R Extensions] manual, Chapter 8.
* [http://www.ci.tuwien.ac.at/Conferences/useR-2004/abstracts/supplements/Urbanek.pdf Talk by Simon Urbanek] at useR! 2004.
* [http://epub.ub.uni-muenchen.de/2085/1/tr012.pdf Technical report] by Friedrich Leisch in 2007.
* https://stat.ethz.ch/pipermail/r-help/attachments/20110729/b7d86ed7/attachment.pl

=== A very simple example (do not return from shell) from Writing R Extensions manual ===

The command-line R front-end, R_HOME/bin/exec/R, is one such example. Its source code is in file <src/main/Rmain.c>.

This example can be run by

<pre>R_HOME/bin/R CMD R_HOME/bin/exec/R</pre>

Note:

# '''R_HOME/bin/exec/R''' is the R binary. However, it cannot be launched directly unless R_HOME and LD_LIBRARY_PATH are set up. Again, this is explained in the Writing R Extensions manual.
# '''R_HOME/bin/R''' is a shell-script front-end that users invoke. It sets up the environment for the executable. It can be copied to ''/usr/local/bin/R''. When we run ''R_HOME/bin/R'', it actually runs ''R_HOME/bin/R CMD R_HOME/bin/exec/R'' (see line 259 of ''R_HOME/bin/R'' as in R 3.0.2), which shows the important role of ''R_HOME/bin/exec/R''.

More examples of embedding can be found in the ''tests/Embedding'' directory. Read <index.html> for more information about these test examples.

=== An example from Bioconductor workshop ===

* What is covered in this section is different from [[R#Create_a_standalone_Rmath_library|Create and use a standalone Rmath library]].
* Use eval() function. See R-Ext [http://cran.r-project.org/doc/manuals/R-exts.html#Embedding-R-under-Unix_002dalikes 8.1] and [http://cran.r-project.org/doc/manuals/R-exts.html#Embedding-R-under-Windows 8.2] and [http://cran.r-project.org/doc/manuals/R-exts.html#Evaluating-R-expressions-from-C 5.11].
* http://stackoverflow.com/questions/2463437/r-from-c-simplest-possible-helloworld (obtained from searching R_tryEval on google)
* http://stackoverflow.com/questions/7457635/calling-r-function-from-c

Example:

Create [https://gist.github.com/arraytools/7d32d92fee88ffc029365d178bc09e75#file-embed-c embed.c] file.

Then build the executable. Note that I don't need to create R_HOME variable.

<pre>
cd
tar xzvf
cd R-3.0.1
./configure --enable-R-shlib
make
cd tests/Embedding
make
~/R-3.0.1/bin/R CMD ./Rtest

nano embed.c
# Using a single line will give an error and does not show the real problem.
# ../../bin/R CMD gcc -I../../include -L../../lib -lR embed.c
# A better way is to compile and link separately
gcc -I../../include -c embed.c
gcc -o embed embed.o -L../../lib -lR -lRblas
../../bin/R CMD ./embed
</pre>

Note that if we want to call the executable file ./embed directly, we need to set up the R environment by specifying the '''R_HOME''' variable and including the directories used in linking R in '''LD_LIBRARY_PATH'''. This is based on the information provided by [http://cran.r-project.org/doc/manuals/r-devel/R-exts.html Writing R Extensions].

<pre>
export R_HOME=/home/brb/Downloads/R-3.0.2
export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:/home/brb/Downloads/R-3.0.2/lib
./embed # No need to include R CMD in front.
</pre>
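The paths in the block above are specific to one machine. As a hedged sketch, the corresponding values for another installation can be read from a running R session with '''R.home()'''; the <code>lib</code> subdirectory is an assumption that holds for Unix-alike builds configured with <code>--enable-R-shlib</code>.

```r
# Directory that R_HOME should point to
r_home <- R.home()
# Directory expected to hold libR on Unix-alike shared-library builds
r_lib <- file.path(r_home, "lib")

# Print shell lines in the same form as the block above
cat(sprintf("export R_HOME=%s\n", r_home))
cat(sprintf("export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:%s\n", r_lib))
```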

Question: Create a data frame in C? Answer: [https://stat.ethz.ch/pipermail/r-devel/2013-August/067107.html Use data.frame() via an eval() call from C]. Or see the code in stats/src/model.c, as part of model.frame.default. Or use Rcpp as [https://stat.ethz.ch/pipermail/r-devel/2013-August/067109.html here].

Reference http://bioconductor.org/help/course-materials/2012/Seattle-Oct-2012/AdvancedR.pdf

=== Create a Simple Socket Server in R ===

This example comes from this [http://epub.ub.uni-muenchen.de/2085/1/tr012.pdf paper].

Create an R function

<pre>
simpleServer <- function(port = 6543)
{
  # Blocking mode makes readLines() wait for the client's next line
  sock <- socketConnection(port = port, server = TRUE, blocking = TRUE)
  on.exit(close(sock))
  cat("\nWelcome to R!\nR>", file = sock)
  # Stop on "quit" or when the read returns nothing (client gone or timeout)
  while (length(line <- readLines(sock, n = 1)) > 0 && line != "quit")
  {
    cat(paste("socket >", line, "\n"))
    # Evaluate the client's input and send back the printed output
    out <- capture.output(try(eval(parse(text = line))))
    writeLines(out, con = sock)
    cat("\nR> ", file = sock)
  }
}
</pre>

Then run simpleServer(). Open another terminal and try to communicate with the server

<pre>
$ telnet localhost 6543
Trying 127.0.0.1...
Connected to localhost.
Escape character is '^]'.

Welcome to R!
R> summary(iris[, 3:5])
  Petal.Length    Petal.Width          Species
 Min.   :1.000   Min.   :0.100   setosa    :50
 1st Qu.:1.600   1st Qu.:0.300   versicolor:50
 Median :4.350   Median :1.300   virginica :50
 Mean   :3.758   Mean   :1.199
 3rd Qu.:5.100   3rd Qu.:1.800
 Max.   :6.900   Max.   :2.500

R> quit
Connection closed by foreign host.
</pre>

=== [http://www.rforge.net/Rserve/doc.html Rserve] ===

Note that Rserve is launched in the same way as a C program that embeds R. See [[R#An_example_from_Bioconductor_workshop|Example from Bioconductor workshop]].

See my [[Rserve]] page.

=== outsider ===

* [https://joss.theoj.org/papers/10.21105/joss.02038 outsider]: Install and run programs, outside of R, inside of R
* [https://github.com/stephenturner/om..bcftools Run bcftools with outsider in R]

=== (Commercial) [http://www.statconn.com/ StatconnDcom] ===

=== [http://rdotnet.codeplex.com/ R.NET] ===

=== [https://cran.r-project.org/web/packages/rJava/index.html rJava] ===

* [https://jozefhajnala.gitlab.io/r/r901-primer-java-from-r-1/ A primer in using Java from R - part 1]
* Note rJava is needed by [https://cran.r-project.org/web/packages/xlsx/index.html xlsx] package.

Terminal

{{Pre}}
# jdk 7
sudo apt-get install openjdk-7-*
update-alternatives --config java
# oracle jdk 8
sudo add-apt-repository -y ppa:webupd8team/java
sudo apt-get update
echo debconf shared/accepted-oracle-license-v1-1 select true | sudo debconf-set-selections
echo debconf shared/accepted-oracle-license-v1-1 seen true | sudo debconf-set-selections
sudo apt-get -y install openjdk-8-jdk
</pre>
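Once a JDK is in place, a quick way to check that rJava can reach it is to start the JVM and call a method on a Java object. This is a minimal sketch, assuming the '''rJava''' package is installed and can find Java; the code is skipped otherwise, and the string is arbitrary.

```r
# Assumes rJava and a working JDK are installed; skipped otherwise
n_chars <- NA_integer_
if (requireNamespace("rJava", quietly = TRUE)) {
  rJava::.jinit()                                  # start the JVM
  s <- rJava::.jnew("java/lang/String", "Hello")   # new java.lang.String
  n_chars <- rJava::.jcall(s, "I", "length")       # call int length()
  print(n_chars)
}
```

The second argument of <code>.jcall()</code> is the JNI return-type signature; <code>"I"</code> stands for a Java <code>int</code>.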